SAP HANA High-Availability Deployment Within a Single Zone

This document describes how to deploy an SAP HANA high-availability (HA) environment within a single zone on the public cloud.

Background

Supported image versions: SLES for SAP 11/12/15.

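Terminology

VPC

A Virtual Private Cloud (VPC) is an isolated network environment built on Alibaba Cloud. VPCs are logically isolated from one another. A VPC is a private network on the cloud that is dedicated to you.

ECS

Elastic Compute Service (ECS) is an IaaS (Infrastructure as a Service) cloud computing service provided by Alibaba Cloud that offers high performance, stability, reliability, and elastic scaling.

ENI

An Elastic Network Interface (ENI) is a virtual network interface that can be attached to an ECS instance in a VPC. With ENIs, you can build high-availability clusters, implement low-cost failover, and manage networks at a fine-grained level.

HAVIP

A Private High-Availability Virtual IP Address (HAVIP) is a private IP resource that can be created and released independently. What makes this private IP special is that you can announce it from an ECS instance by using ARP.

Shared Block Storage

Shared Block Storage is a block-level storage device that supports concurrent reads and writes from multiple ECS instances. It offers high concurrency, high performance, and high reliability. It is commonly used for high-availability database clusters such as Oracle RAC (Real Application Cluster) and for high-availability server clusters.

Region

A region is a physical data center. After a resource is created, its region cannot be changed.

Zone

A zone is a physical area within a region that has independent power and networking. Network latency between instances in the same zone is lower.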

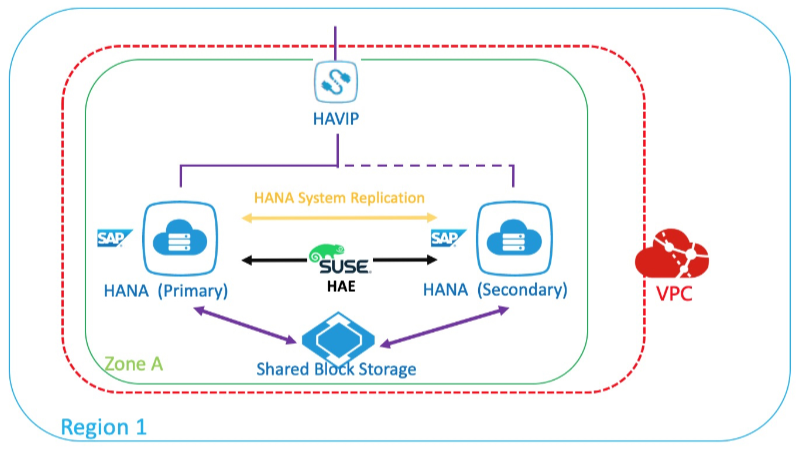

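Architecture overview

Alibaba Cloud supports high-availability deployment of SAP HANA within a single zone. Automatic failover is achieved by configuring SAP HANA System Replication together with SUSE HAE.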

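Preparation

SAP installation media

| Tool | Access method | Notes |
| --- | --- | --- |
| Windows jump server | Install SAP Download Manager on the jump server, download the media, and then upload it to OSS or mount it directly on the ECS instance. | The jump server needs Internet access through an EIP or NAT. |
| OSS tool | Upload the media from your local machine to your OSS bucket with an OSS tool such as ossutil. | None |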

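Network planning

| Network | Region | Purpose | Subnet |
| --- | --- | --- | --- |
| Business network | China East 2 (Shanghai), Zone F | For Business/SR | 192.168.10.0/24 |
| Heartbeat network | China East 2 (Shanghai), Zone F | For HA | 192.168.20.0/24 |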

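Host planning

| Hostname | Role | Heartbeat address | Business address | HAVIP |
| --- | --- | --- | --- | --- |
| saphana-01 | HANA primary node | 192.168.20.19 | 192.168.10.168 | 192.168.10.12 |
| saphana-02 | HANA secondary node | 192.168.20.20 | 192.168.10.169 | 192.168.10.12 |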

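File system planning

| Type | Size | File system | VG | LVM striping | Mount point |
| --- | --- | --- | --- | --- | --- |
| Data disk | 800G | XFS | hanavg | Yes | /hana/data |
| Data disk | 400G | XFS | hanavg | Yes | /hana/log |
| Data disk | 300G | XFS | hanavg | Yes | /hana/shared |
| Data disk | 50G | XFS | sapvg | Yes | /usr/sap |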

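Cloud resource configuration

Create the VPC and ECS instances

Before you use ECS, create a VPC and VSwitches according to your requirements.

Log on to the VPC console at https://vpc.console.aliyun.com/ .

As planned, in Shanghai Zone F, create a VPC with the CIDR block 192.168.0.0/16 and two subnets: 192.168.10.0/24 (business) and 192.168.20.0/24 (heartbeat).

Log on to the ECS console at https://ecs.console.aliyun.com/ .

Create the two HANA ECS instances as planned.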

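Create other cloud resources

Before you deploy the SAP high-availability environment within a single zone, create a Shared Block Storage device and an HAVIP.

The Shared Block Storage device serves as the STONITH device of the HA cluster and is used to fence a failed node.

The HAVIP is the virtual IP of the cluster and is bound to the active primary node. In this example, it is the virtual IP address through which the HANA instance provides services.

Log on to the Alibaba Cloud console and choose ECS > Storage & Snapshots > Shared Block Storage. In the same region and zone as the ECS instances, create a 20 GB SSD Shared Block Storage device.

After the device is created, attach it to both ECS instances in the cluster.

Log on to the Alibaba Cloud console and choose ECS > Network & Security > VPC > HAVIP to create an HAVIP. As planned, create 192.168.10.12 and bind it to the two HANA ECS instances that you just created.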

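HANA ECS configuration

Hostname and DNS resolution

On both HANA servers of the HA cluster, configure hostname resolution between the two HANA ECS instances.

/etc/hosts in this example:

    127.0.0.1 localhost
    192.168.10.168 saphana-01 saphana-01
    192.168.10.169 saphana-02 saphana-02
    192.168.20.19 hana-ha01 hana-ha01
    192.168.20.20 hana-ha02 hana-ha02

ECS SSH mutual trust

SSH mutual trust must be configured between the two HANA ECS instances of the HA cluster.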

Configure the public keys for authentication

Run the following commands on the HANA primary node:

    saphana-01:~ # ssh-keygen -t rsa
    Generating public/private rsa key pair.
    Enter file in which to save the key (/root/.ssh/id_rsa): (press Enter)
    Enter passphrase (empty for no passphrase): (press Enter)
    Enter same passphrase again: (press Enter)
    Your identification has been saved in /root/.ssh/id_rsa.
    Your public key has been saved in /root/.ssh/id_rsa.pub.
    The key fingerprint is:
    SHA256:6lX54zFixfUF7Ni+yEn8+lzBjj4XSF4QoVjznKNx15M root@saphana-01
    The key's randomart image is:
    (randomart image omitted)
    saphana-01:~ # ssh-copy-id -i /root/.ssh/id_rsa.pub root@192.168.10.169
    /usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"
    The authenticity of host '192.168.10.169 (192.168.10.169)' can't be established.
    ECDSA key fingerprint is SHA256:iD5aepnspZcREGbGJpExnMd3YGXPM8FcmSq66KLCgsk.
    Are you sure you want to continue connecting (yes/no)? yes
    /usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
    /usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
    Password: (enter the root password of the secondary node)

    Number of key(s) added: 1

    Now try logging into the machine, with: "ssh 'root@192.168.10.169'"
    and check to make sure that only the key(s) you wanted were added.
    saphana-01:~ #

Run the following commands on the HANA secondary node. The public key is copied to the primary node (192.168.10.168):

    saphana-02:~ # ssh-keygen -t rsa
    Generating public/private rsa key pair.
    Enter file in which to save the key (/root/.ssh/id_rsa):
    Enter passphrase (empty for no passphrase):
    Enter same passphrase again:
    Your identification has been saved in /root/.ssh/id_rsa.
    Your public key has been saved in /root/.ssh/id_rsa.pub.
    The key fingerprint is:
    SHA256:116JLe/MTR494dejsZkgrtvfFdL6+WwGnmcc9QD38Zc root@saphana-02
    The key's randomart image is:
    (randomart image omitted)
    saphana-02:~ # ssh-copy-id -i /root/.ssh/id_rsa.pub root@192.168.10.168
    /usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"
    The authenticity of host '192.168.10.168 (192.168.10.168)' can't be established.
    ECDSA key fingerprint is SHA256:iD5aepnspZcREGbGJpExnMd3YGXPM8FcmSq66KLCgsk.
    Are you sure you want to continue connecting (yes/no)? yes
    /usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
    /usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
    Password:

    Number of key(s) added: 1

    Now try logging into the machine, with: "ssh 'root@192.168.10.168'"
    and check to make sure that only the key(s) you wanted were added.

Verify the configuration

On each node, log on to the other node over SSH. If no password is required, mutual trust is established.

Verify on the HANA primary node:

    saphana-01:~ # ssh saphana-02
    Last login: Mon Apr 22 23:36:01 2019 from 192.168.10.168
    Welcome to Alibaba Cloud Elastic Compute Service !
    saphana-02:~ #

Verify on the HANA secondary node:

    saphana-02:~ # ssh saphana-01
    Last login: Mon Apr 22 23:36:21 2019 from 192.168.10.169
    Welcome to Alibaba Cloud Elastic Compute Service !
    saphana-01:~ #
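The interactive login check can also be scripted. The following is a minimal sketch, not part of the original procedure: the function name check_ssh_trust is hypothetical, and it assumes the root user and the hostnames from the host plan. BatchMode=yes makes ssh fail instead of prompting, so a password prompt shows up as a failure.

```shell
#!/bin/bash
# check_ssh_trust PEER (hypothetical helper)
# Succeeds only if root can log in to PEER without being asked for a password.
check_ssh_trust() {
    local peer="$1"
    # BatchMode=yes disables password prompts; ConnectTimeout avoids long hangs.
    if ssh -o BatchMode=yes -o ConnectTimeout=5 "root@${peer}" true; then
        echo "OK: passwordless SSH to ${peer}"
    else
        echo "FAIL: passwordless SSH to ${peer} is not working"
        return 1
    fi
}

# Example, run on saphana-01:
#   check_ssh_trust saphana-02
# Example, run on saphana-02:
#   check_ssh_trust saphana-01
```

Run it once from each node so that both directions are checked.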

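Deploy the ECS Metrics Collector for SAP monitoring agent

ECS Metrics Collector is a monitoring agent that SAP systems on the cloud platform use to collect the required virtual machine configuration information and underlying physical resource usage information for later performance statistics and troubleshooting. Metrics Collector must be installed on every SAP application and database instance. For deployment instructions, see the ECS Metrics Collector for SAP deployment guide.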

File system layout

Following the file system plan above, use LVM to manage and configure the cloud disks on both cluster nodes.

For an introduction to LVM, see the LVM HOWTO.

Create the PVs and VGs

    # pvcreate /dev/vdb /dev/vdc /dev/vdd /dev/vdg
    Physical volume "/dev/vdb" successfully created
    Physical volume "/dev/vdc" successfully created
    Physical volume "/dev/vdd" successfully created
    Physical volume "/dev/vdg" successfully created
    # vgcreate hanavg /dev/vdb /dev/vdc /dev/vdd
    Volume group "hanavg" successfully created
    # vgcreate sapvg /dev/vdg
    Volume group "sapvg" successfully created

Create the LVs

    # lvcreate -l 100%FREE -n usrsaplv sapvg
    Logical volume "usrsaplv" created.

Stripe the three 500 GB SSD cloud disks:

    # lvcreate -L 800G -n datalv -i 3 -I 64 hanavg
    Rounding size (204800 extents) up to stripe boundary size (204801 extents).
    Logical volume "datalv" created.
    # lvcreate -L 400G -n loglv -i 3 -I 64 hanavg
    Rounding size (102400 extents) up to stripe boundary size (102402 extents).
    Logical volume "loglv" created.
    # lvcreate -l 100%FREE -n sharedlv -i 3 -I 64 hanavg
    Rounding size (38395 extents) down to stripe boundary size (38394 extents)
    Logical volume "sharedlv" created.

Create the mount points and format the file systems

    # mkdir -p /usr/sap /hana/data /hana/log /hana/shared
    # mkfs.xfs /dev/sapvg/usrsaplv
    # mkfs.xfs /dev/hanavg/datalv
    # mkfs.xfs /dev/hanavg/loglv
    # mkfs.xfs /dev/hanavg/sharedlv

Mount the file systems and add them to /etc/fstab so that they are mounted at boot

    # vim /etc/fstab

Add the following entries:

    /dev/mapper/hanavg-datalv   /hana/data   xfs  defaults 0 0
    /dev/mapper/hanavg-loglv    /hana/log    xfs  defaults 0 0
    /dev/mapper/hanavg-sharedlv /hana/shared xfs  defaults 0 0
    /dev/mapper/sapvg-usrsaplv  /usr/sap     xfs  defaults 0 0
    /dev/vdf                    swap         swap defaults 0 0

Mount everything and check the result:

    # mount -a
    # df -h
    Filesystem                    Size  Used Avail Use% Mounted on
    devtmpfs                       32G     0   32G   0% /dev
    tmpfs                          48G   55M   48G   1% /dev/shm
    tmpfs                          32G  768K   32G   1% /run
    /dev/vda1                      99G   30G   64G  32% /
    tmpfs                         6.3G   16K  6.3G   1% /run/user/0
    /dev/mapper/hanavg-datalv     800G   34M  800G   1% /hana/data
    /dev/mapper/sapvg-usrsaplv     50G   33M   50G   1% /usr/sap
    /dev/mapper/hanavg-loglv      400G   33M  400G   1% /hana/log
    /dev/mapper/hanavg-sharedlv   300G   33M  300G   1% /hana/shared
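The "Rounding size ... up to stripe boundary size" messages printed by lvcreate for datalv and loglv follow from simple arithmetic: a striped LV must contain a whole number of extents per stripe. The sketch below reproduces those two numbers. It assumes the LVM default physical extent size of 4 MiB (the guide does not set a different one), and the function name striped_extents is hypothetical.

```shell
#!/bin/bash
# striped_extents SIZE_GIB STRIPES [EXTENT_MIB] (hypothetical helper)
# Prints the number of extents a striped LV of SIZE_GIB occupies once the
# size is rounded up to a multiple of the stripe count.
striped_extents() {
    local size_gib="$1" stripes="$2" extent_mib="${3:-4}"   # 4 MiB: assumed LVM default
    local extents=$(( size_gib * 1024 / extent_mib ))
    # Round up to the next multiple of the stripe count.
    echo $(( (extents + stripes - 1) / stripes * stripes ))
}

striped_extents 800 3   # datalv: prints 204801
striped_extents 400 3   # loglv:  prints 102402
```

The extra one or two extents (4 to 8 MiB) are why the LVs end up marginally larger than the requested 800G and 400G.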

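Install SAP HANA and configure HANA System Replication

Install SAP HANA

The primary and secondary HANA nodes must use the same System ID and Instance ID. In this example, the System ID is H01 and the Instance ID is 00.

For SAP HANA installation instructions, see SAP HANA Platform.

Configure HANA System Replication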
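A minimal sketch of the usual hdbnsutil sequence for this step, under stated assumptions: the site names SiteA and SiteB are hypothetical, and the replication mode (sync) and operation mode (logreplay) are illustrative choices rather than values taken from this guide. The helper print_hsr_commands only prints the commands so that they can be reviewed first; they are meant to be run as the <sid>adm user (h01adm in this example). A data backup of the primary is required before replication can be enabled, and on HANA 2.0 the system PKI SSFS key and data files typically also have to be copied from the primary to the secondary before registration.

```shell
#!/bin/bash
# print_hsr_commands PRIMARY_HOST INSTANCE_NO PRIMARY_SITE SECONDARY_SITE
# Hypothetical helper: prints (does not run) the System Replication commands.
print_hsr_commands() {
    local primary_host="$1" inst="$2" site_a="$3" site_b="$4"
    cat <<EOF
# On the primary node, as <sid>adm (a data backup must exist first):
hdbnsutil -sr_enable --name=${site_a}
# On the secondary node, as <sid>adm:
HDB stop
hdbnsutil -sr_register --name=${site_b} --remoteHost=${primary_host} --remoteInstance=${inst} --replicationMode=sync --operationMode=logreplay
HDB start
# On the primary node, check the replication state:
hdbnsutil -sr_state
EOF
}

# Values from this example; SiteA and SiteB are assumed site names.
print_hsr_commands saphana-01 00 SiteA SiteB
```

Whatever site names are chosen here are the ones that later appear in the cluster attributes maintained by SAPHanaSR.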

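Install and configure SLES HAE

Install the SLES HAE software

For SLES HAE documentation, see SUSE Linux Enterprise High Availability Extension 12.

On the primary and secondary nodes, check whether the HAE and SAPHanaSR components are installed.

Important: This example uses the SLES for SAP 12 SP3 CSP (Cloud Service Provider) image, which is preconfigured with the Alibaba Cloud SUSE SMT server, so you can check and install components directly. If you use a custom image, first purchase a SUSE subscription and register with the official SUSE SMT server, or configure the zypper repositories manually, before you continue.

The following components are required to configure SLES HAE and to install and manage SAP HANA resources: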

patterns-ha-ha_sles

SAPHanaSR

sap_suse_cluster_connector

patterns-sle-gnome-basic
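One way to check which of these components are still missing is to query the RPM database. This is a small sketch, with the function name missing_packages being hypothetical; it relies only on rpm -q, which returns a non-zero status for a package that is not installed.

```shell
#!/bin/bash
# missing_packages PKG... (hypothetical helper)
# Prints every package that rpm does not report as installed.
missing_packages() {
    local pkg
    for pkg in "$@"; do
        rpm -q "$pkg" >/dev/null 2>&1 || echo "$pkg"
    done
}

# Example, run on both nodes:
#   missing_packages patterns-ha-ha_sles SAPHanaSR \
#       sap_suse_cluster_connector patterns-sle-gnome-basic
```

An empty result means all listed components are already present.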

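Install the required components with the following command:

    zypper in patterns-ha-ha_sles SAPHanaSR sap_suse_cluster_connector

Configure the cluster

This example uses VNC to open the graphical interface and configures Corosync on the HANA primary node.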

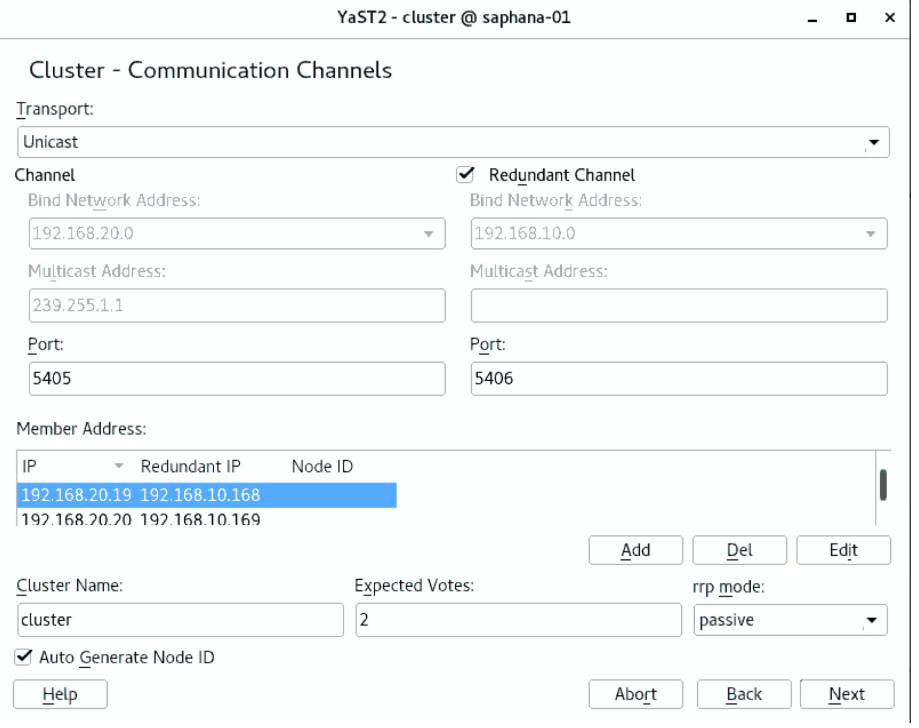

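    yast2 cluster

Configure the communication channels

For Channel, select the heartbeat subnet. For Redundant Channel, select the business subnet.

Add the member addresses in the correct order (heartbeat address first, then business address).

Expected Votes: 2.

Transport: Unicast.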

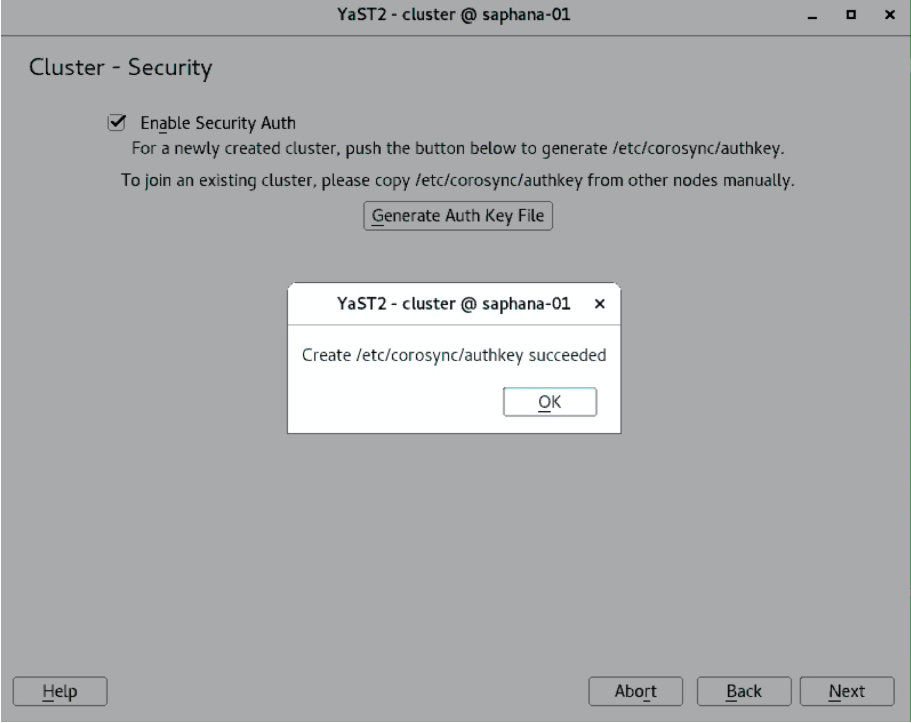

Configure Security

Select Enable Security Auth, and then click Generate Auth Key File.

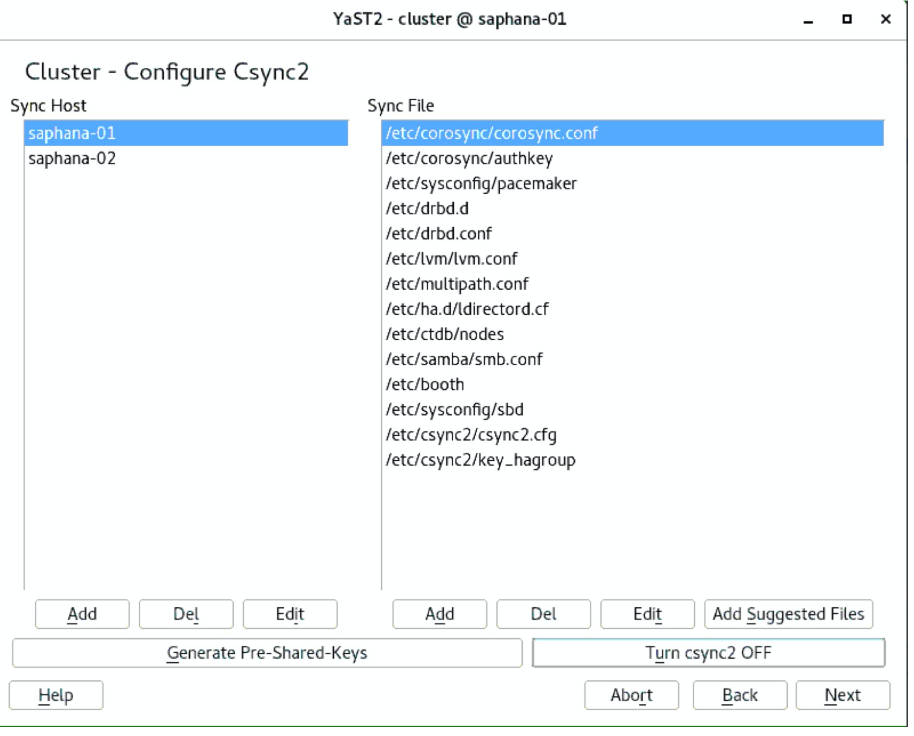

Configure Csync2

Add the sync hosts.

Click Add Suggested Files.

Click Generate Pre-Shared-Keys.

Click Turn csync2 ON.

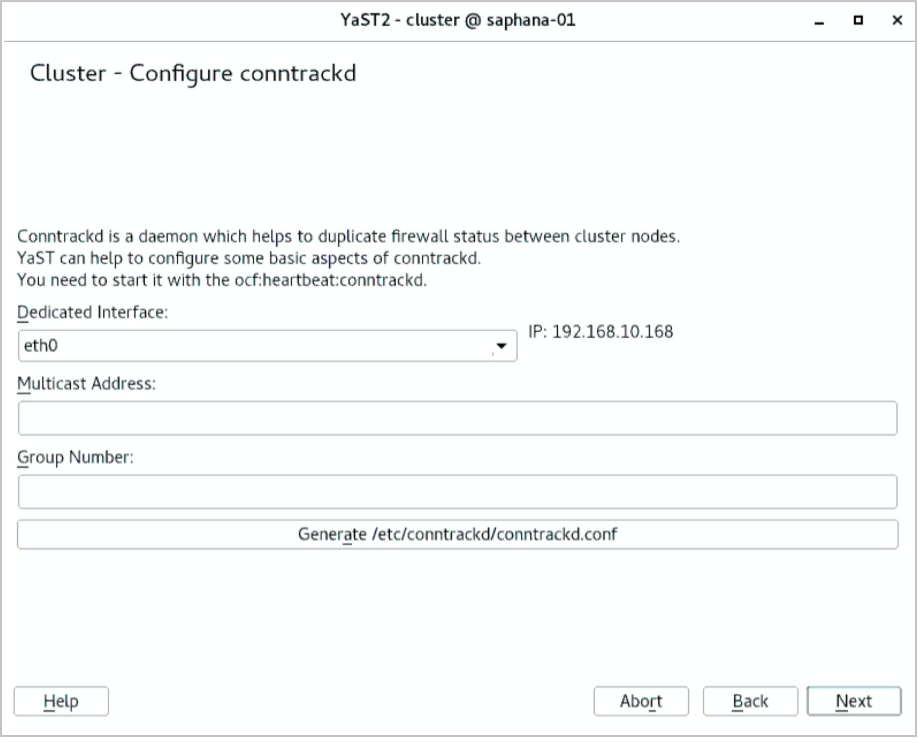

For Configure conntrackd, keep the defaults and go to the next step.

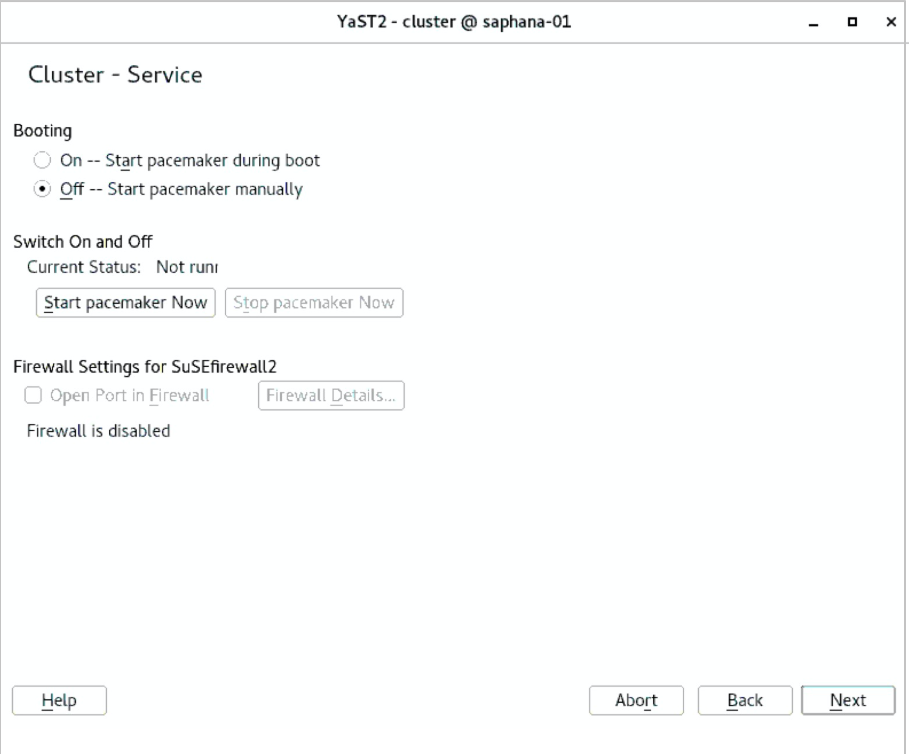

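Configure Service

Make sure that the cluster service is not set to start at boot.

After the configuration is complete, save and exit. Then copy the Corosync configuration files to the HANA secondary node by running the following command on the primary node:

    # sudo scp -pr /etc/corosync/authkey /etc/corosync/corosync.conf root@saphana-02:/etc/corosync/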
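Before starting the cluster, it can be worth confirming that the copied corosync.conf reflects the YaST choices made earlier. The sketch below assumes corosync 2.x syntax, where unicast transport is written as transport: udpu and the vote count as expected_votes; the function name check_corosync_conf is hypothetical.

```shell
#!/bin/bash
# check_corosync_conf FILE (hypothetical helper)
# Verifies that FILE selects unicast transport and expects two votes.
check_corosync_conf() {
    local conf="$1" rc=0
    grep -Eq '^[[:space:]]*transport:[[:space:]]*udpu[[:space:]]*$' "$conf" \
        || { echo "transport is not udpu (unicast)"; rc=1; }
    grep -Eq '^[[:space:]]*expected_votes:[[:space:]]*2[[:space:]]*$' "$conf" \
        || { echo "expected_votes is not 2"; rc=1; }
    return "$rc"
}

# Example, run on both nodes:
#   check_corosync_conf /etc/corosync/corosync.conf
```

The function prints nothing and returns 0 when both settings are found.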

Verify the cluster status

Run the following command on both nodes to start the pacemaker service:

    # systemctl start pacemaker

Confirm that both nodes in the cluster are online:

    # crm_mon -r
    Stack: corosync
    Current DC: saphana-02 (version 1.1.16-4.8-77ea74d) - partition with quorum
    Last updated: Tue Apr 23 11:22:38 2019
    Last change: Tue Apr 23 11:22:36 2019 by hacluster via crmd on saphana-02

    2 nodes configured
    0 resources configured

    Online: [ saphana-01 saphana-02 ]

    No resources

Activate the Hawk2 web service:

    # passwd hacluster
    New password:
    Retype new password:
    passwd: password updated successfully
    # systemctl restart hawk.service

Enable the Hawk2 service to start at boot:

    # systemctl enable hawk.service
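The "both nodes online" check from the crm_mon output in this section can be automated by parsing the Online: line. This is a sketch only: the function name nodes_online is hypothetical, and it assumes the one-shot output of crm_mon -1 has the same Online: [ ... ] format as the output shown in this section.

```shell
#!/bin/bash
# nodes_online NODE... (hypothetical helper)
# Reads crm_mon output on stdin; succeeds if every NODE is in the Online list.
nodes_online() {
    local online node
    # Extract the text between the brackets of the "Online: [ ... ]" line.
    online=$(sed -n 's/^[[:space:]]*Online: \[\(.*\)\].*$/\1/p')
    for node in "$@"; do
        case " $online " in
            *" $node "*) ;;
            *) echo "not online: $node"; return 1 ;;
        esac
    done
}

# Example:
#   crm_mon -1 | nodes_online saphana-01 saphana-02
```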

Configure SBD (quorum disk)

Make sure that the 20 GB Shared Block Storage device is attached to both ECS instances as planned. In this example, the quorum disk is /dev/vdf.

    saphana-01:~ # lsblk
    NAME   MAJ:MIN RM  SIZE RO TYPE MOUNTPOINT
    vda    253:0    0  100G  0 disk
    └─vda1 253:1    0  100G  0 part /
    vdb    253:16   0  500G  0 disk
    vdc    253:32   0  500G  0 disk
    vdd    253:48   0  500G  0 disk
    vde    253:64   0   64G  0 disk
    vdf    253:80   0   20G  0 disk

    saphana-02:~ # lsblk
    NAME   MAJ:MIN RM  SIZE RO TYPE MOUNTPOINT
    vda    253:0    0  100G  0 disk
    └─vda1 253:1    0  100G  0 part /
    vdb    253:16   0  500G  0 disk
    vdc    253:32   0  500G  0 disk
    vdd    253:48   0  500G  0 disk
    vde    253:64   0   64G  0 disk
    vdf    253:80   0   20G  0 disk

Configure the watchdog (on both cluster nodes)

    # echo "modprobe softdog" > /etc/init.d/boot.local
    # echo "softdog" > /etc/modules-load.d/watchdog.conf
    # modprobe softdog

Check the watchdog configuration:

    saphana-01:~ # ls -l /dev/watchdog
    crw------- 1 root root 10, 130 Apr 23 12:09 /dev/watchdog
    saphana-01:~ # lsmod | grep -e wdt -e dog
    softdog 16384 0
    saphana-01:~ # grep -e wdt -e dog /etc/modules-load.d/watchdog.conf
    softdog

Configure SBD (on both cluster nodes)

    # sbd -d /dev/vdf create
    Initializing device /dev/vdf
    Creating version 2.1 header on device 4 (uuid: e3874a81-47ae-4578-b7a2-4b32bd139e07)
    Initializing 255 slots on device 4
    Device /dev/vdf is initialized.
    # sbd -d /dev/vdf dump
    ==Dumping header on disk /dev/vdf
    Header version     : 2.1
    UUID               : e3874a81-47ae-4578-b7a2-4b32bd139e07
    Number of slots    : 255
    Sector size        : 512
    Timeout (watchdog) : 5
    Timeout (allocate) : 2
    Timeout (loop)     : 1
    Timeout (msgwait)  : 10
    ==Header on disk /dev/vdf is dumped

Configure the SBD parameters:

    # vim /etc/sysconfig/sbd

Change the following parameters. Set SBD_DEVICE to the device ID of the SBD cloud disk:

    SBD_DEVICE="/dev/vdf"
    SBD_STARTMODE="clean"
    SBD_OPTS="-W"

Verify the SBD service

Start sbd on both nodes:

    # /usr/share/sbd/sbd.sh start

Verify the SBD processes:

    # ps -ef | grep sbd
    root 16148     1 0 14:02 pts/0 00:00:00 sbd: inquisitor
    root 16150 16148 0 14:02 pts/0 00:00:00 sbd: watcher: /dev/vdf - slot: 1 - uuid: 9b620112-1031-48b8-9510-8e1b77032472
    root 16151 16148 0 14:02 pts/0 00:00:00 sbd: watcher: Pacemaker
    root 16152 16148 0 14:02 pts/0 00:00:00 sbd: watcher: Cluster
    root 16162 15254 0 14:05 pts/0 00:00:00 grep --color=auto sbd

Check the SBD status and make sure that both nodes are in the clear state:

    # sbd -d /dev/vdf list
    0 saphana-01 clear
    1 saphana-02 clear

Verify SBD fencing:

Note: Make sure that important service processes on the node to be fenced have been stopped.

In this example, log on to the primary node saphana-01 and fence the secondary node saphana-02:

    saphana-01 # sbd -d /dev/vdf message saphana-02 reset

If the secondary node saphana-02 restarts normally, the SBD disk is configured correctly.
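The dump and list checks in this section can be scripted so that they are easy to repeat after maintenance. The sketch below parses the text output of sbd dump and sbd list from stdin; both function names are hypothetical. The timeout rule it checks (msgwait at least twice the watchdog timeout) is the commonly cited SUSE guideline, which the values in this example (5 and 10) satisfy.

```shell
#!/bin/bash
# sbd_timeouts_ok (hypothetical helper)
# Reads `sbd -d DEVICE dump` output on stdin; succeeds if msgwait >= 2 * watchdog.
sbd_timeouts_ok() {
    awk -F: '
        /Timeout \(watchdog\)/ { wd = $2 + 0 }
        /Timeout \(msgwait\)/  { mw = $2 + 0 }
        END {
            printf "watchdog=%d msgwait=%d\n", wd, mw
            exit !(wd > 0 && mw >= 2 * wd)
        }'
}

# sbd_slots_clear (hypothetical helper)
# Reads `sbd -d DEVICE list` output on stdin; fails if any slot is not "clear".
sbd_slots_clear() {
    awk 'NF && $3 != "clear" { bad = 1; print "slot not clear: " $0 }
         END { exit bad + 0 }'
}

# Example:
#   sbd -d /dev/vdf dump | sbd_timeouts_ok
#   sbd -d /dev/vdf list | sbd_slots_clear
```

After a fence test, the fenced node's slot may still show the last message until the node rejoins, so a non-clear slot right after the reset is expected.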

SAP HANA与SLES HAE集成

使用SAPHanaSR配置SAP HANA资源

在任意集群节点,新建脚本⽂件,替换脚本中的HANA SID、Instance Number和HAVIP三个参数。本示例中,HANA SID:H01,Instance Number:00,HAVIP:192.168.10.12,脚本⽂件名HANA_HA_script.sh。

###SAP HANA Topology is a resource agent that monitors and analyze the HANA landscape and communicate the status between two nodes## primitive rsc_SAPHanaTopology_HDB ocf:suse:SAPHanaTopology \ operations $id=rsc_SAPHanaTopology_HDB-operations \ op monitor interval=10 timeout=600 \ op start interval=0 timeout=600 \ op stop interval=0 timeout=300 \ params SID=H01 InstanceNumber=00 ###This file defines the resources in the cluster together with the Virtual IP### primitive rsc_SAPHana_HDB ocf:suse:SAPHana \ operations $id=rsc_SAPHana_HDB-operations \ op start interval=0 timeout=3600 \ op stop interval=0 timeout=3600 \ op promote interval=0 timeout=3600 \ op monitor interval=60 role=Master timeout=700 \ op monitor interval=61 role=Slave timeout=700 \ params SID=H01 InstanceNumber=00 PREFER_SITE_TAKEOVER=true DUPLICATE_PRIMARY_TIMEOUT=7200 AUTOMATED_REGISTER=false #This is for sbd setting## primitive rsc_sbd stonith:external/sbd \ op monitor interval=20 timeout=15 \ meta target-role=Started maintenance=false #This is for VIP resource setting## primitive rsc_vip IPaddr2 \ operations $id=rsc_vip-operations \ op monitor interval=10s timeout=20s \ params ip=192.168.10.12 ms msl_SAPHana_HDB rsc_SAPHana_HDB \ meta is-managed=true notify=true clone-max=2 clone-node-max=1 target- role=Started interleave=true maintenance=false clone cln_SAPHanaTopology_HDB rsc_SAPHanaTopology_HDB \ meta is-managed=true clone-node-max=1 target-role=Started interleave=true maintenance=false colocation col_saphana_ip_HDB 2000: rsc_vip:Started msl_SAPHana_HDB:Master order ord_SAPHana_HDB 2000: cln_SAPHanaTopology_HDB msl_SAPHana_HDB property cib-bootstrap-options: \ have-watchdog=true \ cluster-infrastructure=corosync \ cluster-name=cluster \ no-quorum-policy=ignore \ stonith-enabled=true \ stonith-action=reboot \ stonith-timeout=150s op_defaults op-options: \ timeout=600 \ record-pending=true运行以下命令使HAE接管SAP HANA:

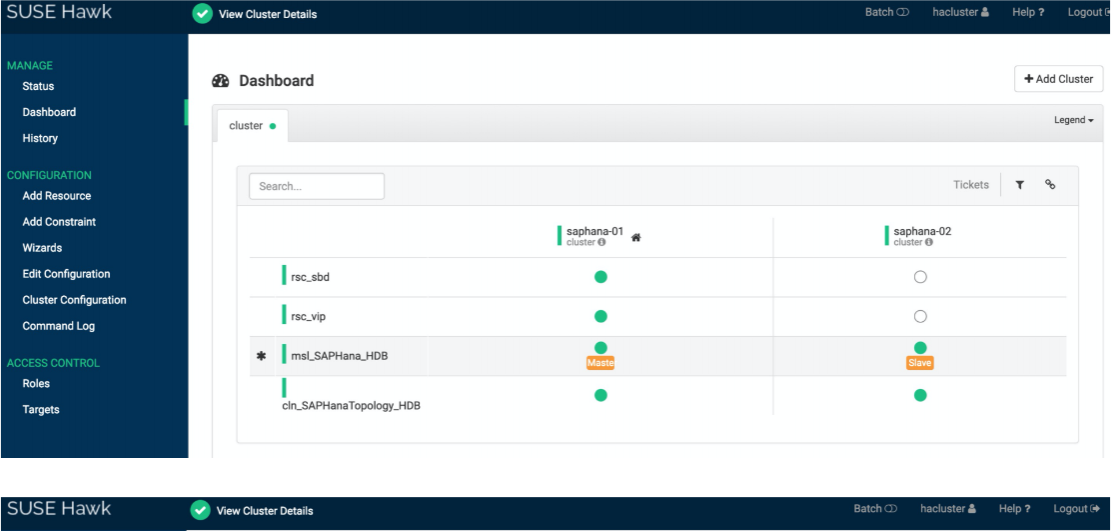

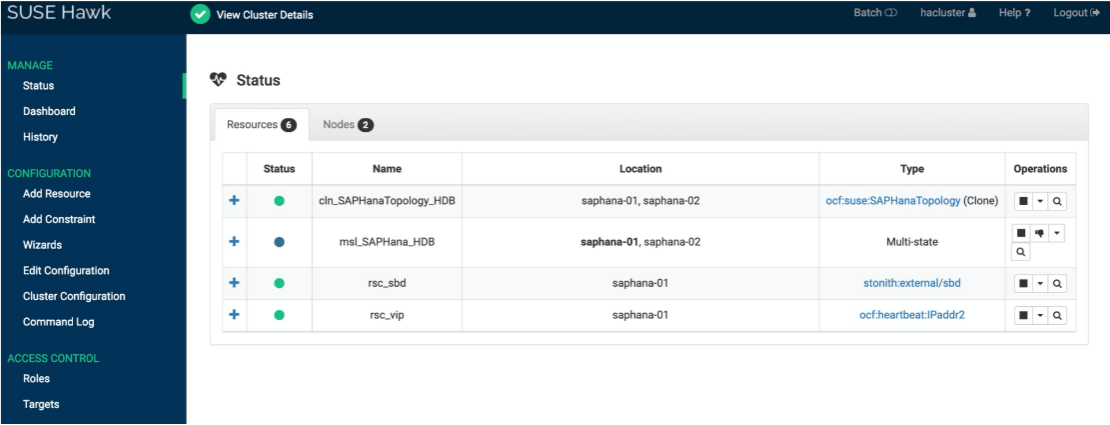

crm configure load update HANA_HA_script.sh验证集群状态

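Normal cluster resource status:

The sbd and vip resources run on the current primary node.

The SAPHana_HDB resource is green on both the master and the slave node.

The SAPHanaTopology resource is green on both the master and the slave node.

You can manage and configure HAE resources with crmsh or the Hawk web interface.

Manage with the Hawk web interface

Log on to https://<ECS IP address>:7630

Manage with crmsh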

    # crm_mon -r
    Stack: corosync
    Current DC: saphana-01 (version 1.1.16-4.8-77ea74d) - partition with quorum
    Last updated: Wed Apr 24 11:48:38 2019
    Last change: Wed Apr 24 11:48:35 2019 by root via crm_attribute on saphana-01

    2 nodes configured
    6 resources configured

    Online: [ saphana-01 saphana-02 ]

    Full list of resources:

    rsc_sbd (stonith:external/sbd): Started saphana-01
    rsc_vip (ocf::heartbeat:IPaddr2): Started saphana-01
     Master/Slave Set: msl_SAPHana_HDB [rsc_SAPHana_HDB]
         Masters: [ saphana-01 ]
         Slaves: [ saphana-02 ]
     Clone Set: cln_SAPHanaTopology_HDB [rsc_SAPHanaTopology_HDB]
         Started: [ saphana-01 saphana-02 ]
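For scripted health checks, the master and slave assignment can be read from the same crm_mon output. A minimal sketch, assuming the Masters: [ ... ] and Slaves: [ ... ] line format shown above (as printed by the Pacemaker 1.1 release in this example); the function name hana_roles is hypothetical.

```shell
#!/bin/bash
# hana_roles (hypothetical helper)
# Reads crm_mon output on stdin, prints the master and slave nodes, and
# fails if either role is missing.
hana_roles() {
    awk '
        /Masters: \[/ { sub(/.*Masters: \[ */, ""); sub(/ *\].*/, ""); master = $0 }
        /Slaves: \[/  { sub(/.*Slaves: \[ */, "");  sub(/ *\].*/, ""); slave = $0 }
        END {
            print "master=" master " slave=" slave
            exit !(master != "" && slave != "")
        }'
}

# Example:
#   crm_mon -1 -r | hana_roles
```

With the status shown above, this would report saphana-01 as master and saphana-02 as slave.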

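Related documents

For maintenance of the SAP high-availability environment, see the SAP high-availability environment maintenance guide.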