2车2无人机 无人机扫描二维码跟车

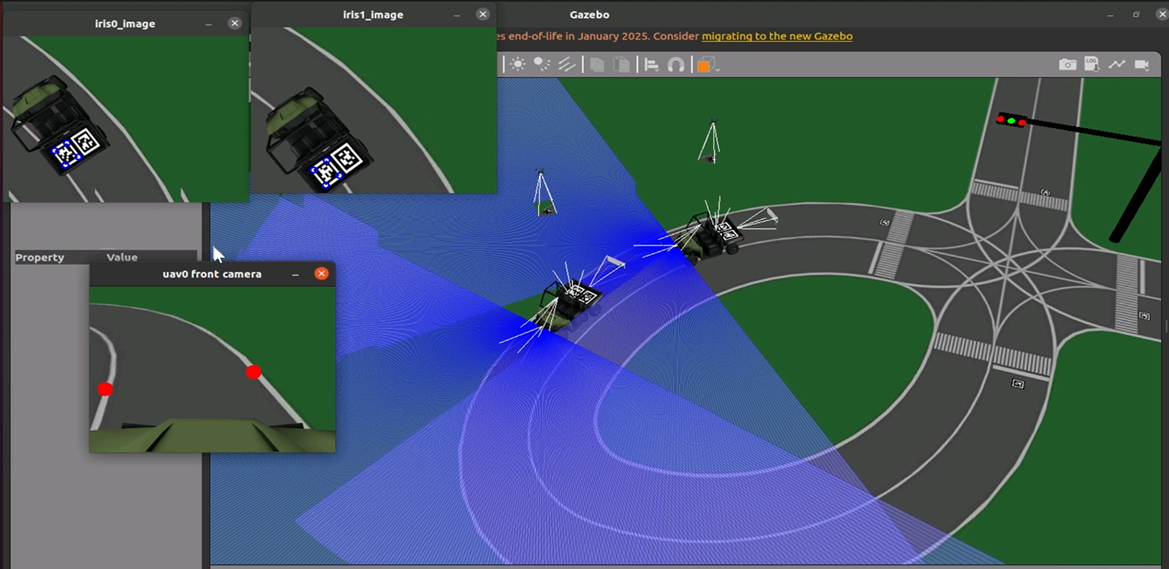

本实验通过生成两辆车以及两架无人机,前方车辆巡线行驶,后方车辆跟随前车,无人机读取车辆后方二维码并跟车飞行

场景简介

本实验介绍通过生成两辆车以及两架无人机,前方车辆巡线行驶,后方车辆跟随前车,无人机读取车辆后方二维码并跟车飞行

实验室资源方式简介

进入实操前,请确保阿里云账号满足以下条件:

个人账号资源

使用您个人的云资源进行操作,资源归属于个人。

平台仅提供手册参考,不会对资源做任何操作。

确保已完成云工开物300元代金券领取。

已通过实名认证且账户余额≥100元。

本次实验将在您的账号下开通实操所需计算型实例规格族c7a,费用约为:3元/时(具体金额取决于实验完成的时间),需要您通过阿里云云工开物学生专属300元抵扣金兑换本次实操的云资源。

如果您调整了资源规格、使用时长,或执行了本方案以外的操作,可能导致费用发生变化,请以控制台显示的实际价格和最终账单为准。

领取专属权益及创建实验资源

在开始实验之前,请先点击右侧屏幕的“进入实操”再进行后续操作

本次实验需要您通过领取阿里云云工开物学生专属300元抵扣券兑换本次实操的云资源,如未领取请先点击领取。(若已领取请跳过)

实验产生的费用优先使用优惠券,优惠券使用完毕后需您自行承担。

实验步骤

1、服务部署

点击链接,进入部署页面

按下图设置【服务实例名称】、【地域】、【实例密码】

服务实例名称:test(可自定义命名)

地域:华东2(上海)

实例密码:Sjtu@520

说明输入实例密码时请注意大小写,请记住您设置的实例名称及对应密码,后续实验过程会用到。

完成填写后点击【下一步:确认订单】

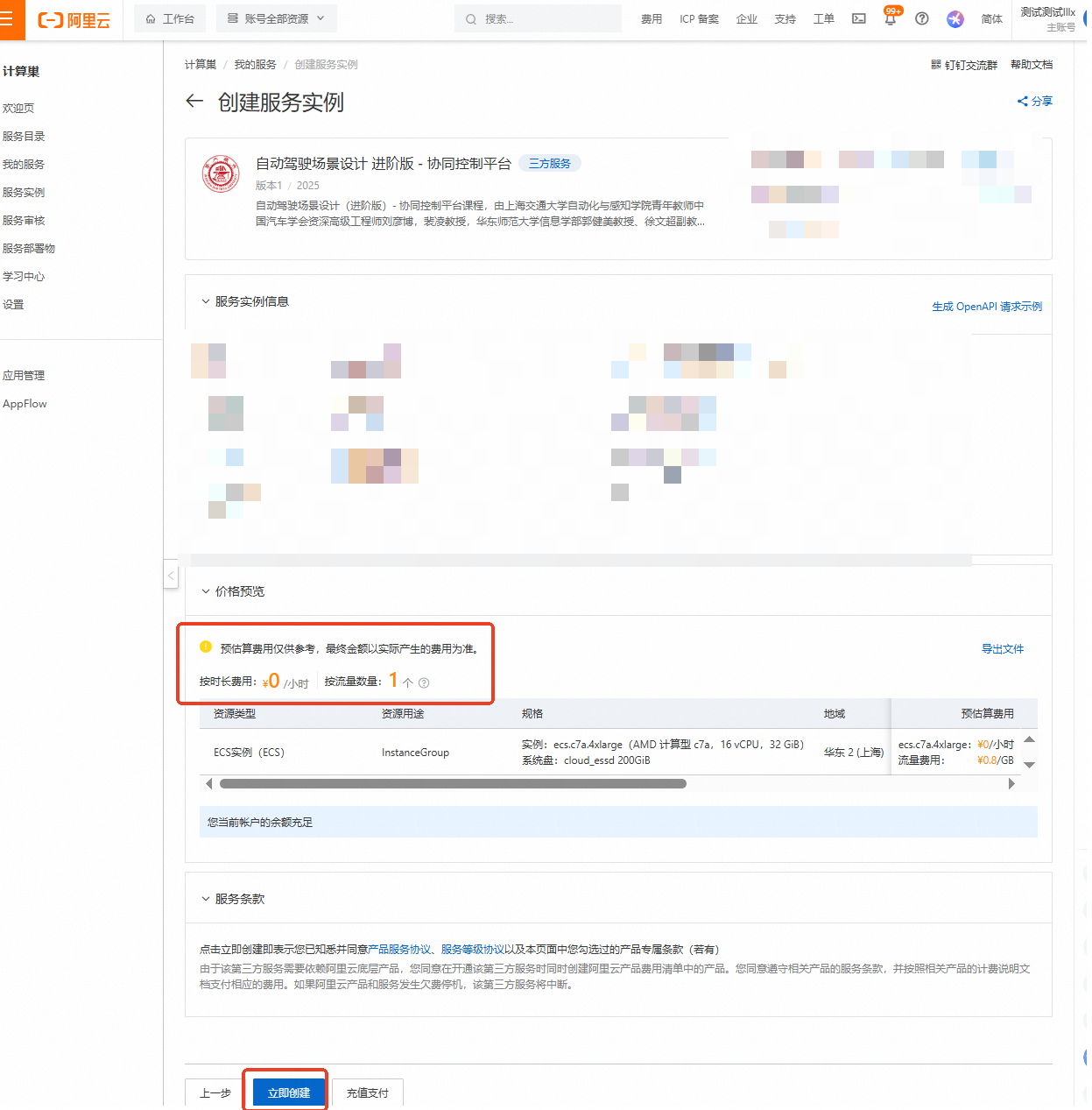

核对实例信息及价格预览,无误请点击【立即创建】

重要

重要领取300元优惠券后,资源应为0元/小时,且会提示【您当前账户的余额充足】!若提示余额不足等,请检查是否正确领取优惠券

创建成功,点击【去列表查看】

查看实例,并等待部署

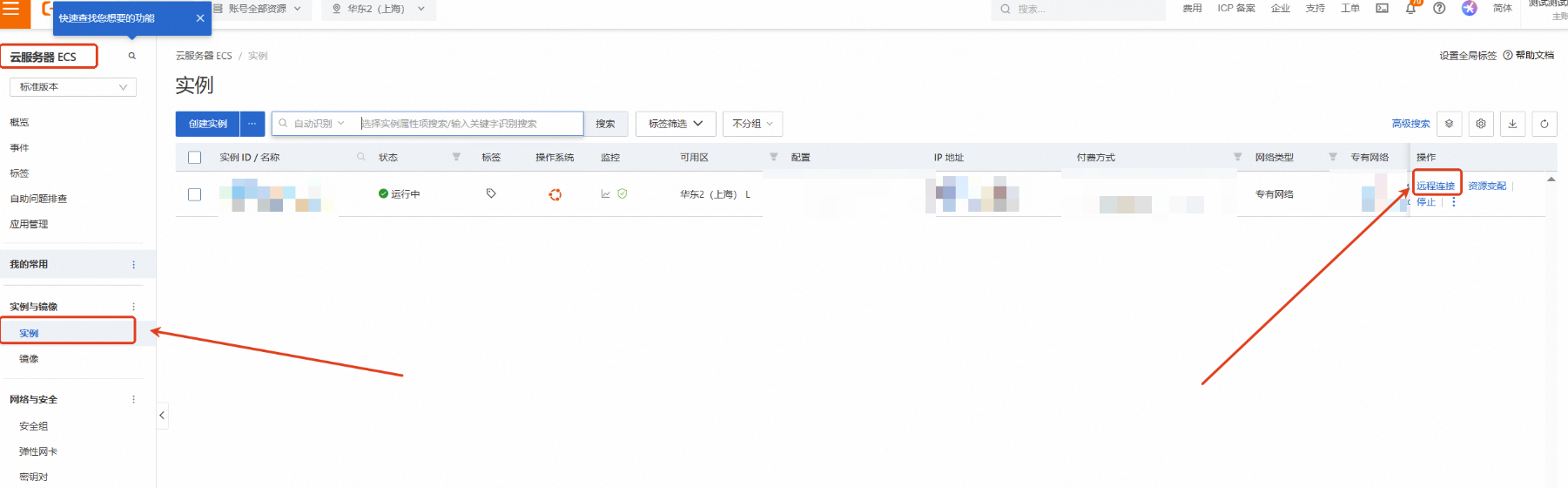

点击左侧的图标展开目录,选择目录中的【云服务器ECS】

云服务器ECS—实例—远程连接

下拉展开更多登录方式,选择【通过VNC远程连接】

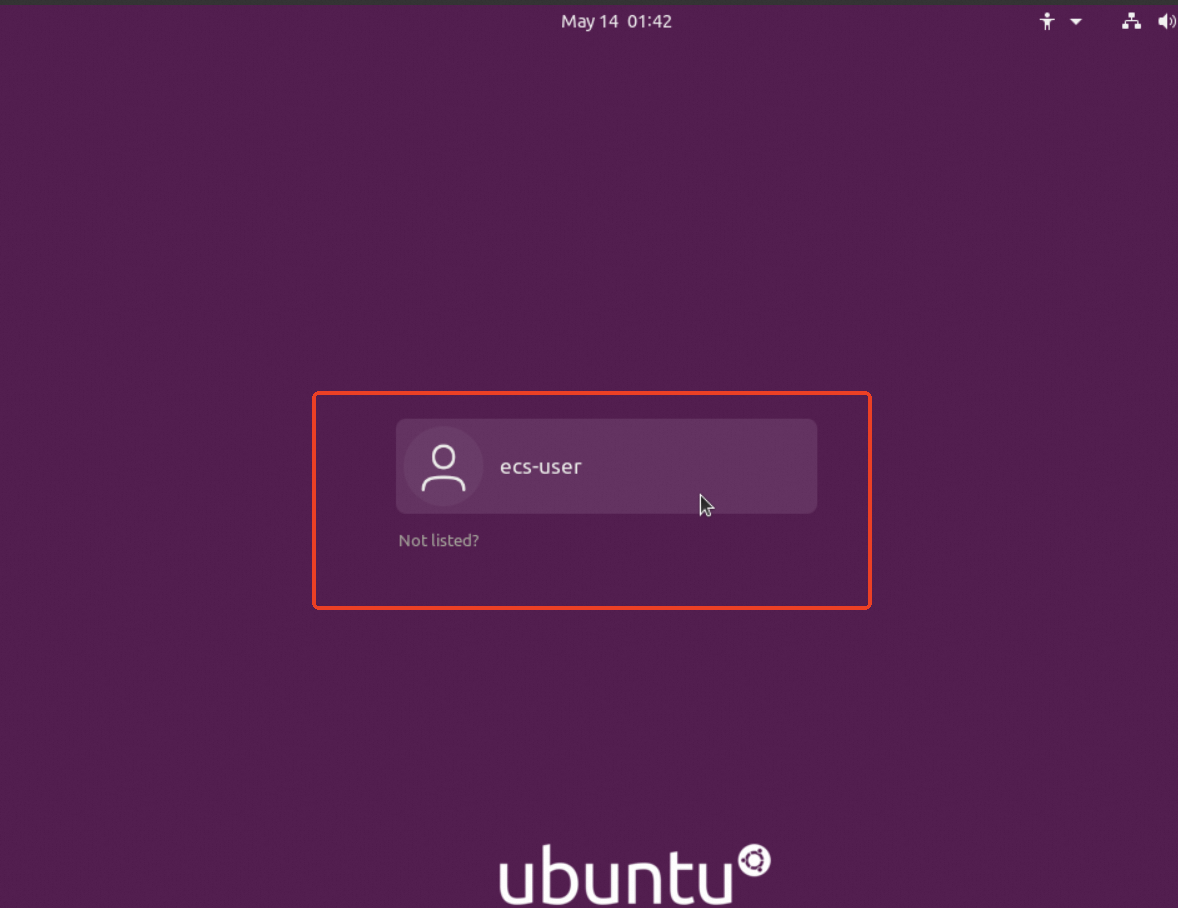

点击ecs-user,并输入之前设置的实例密码:Sjtu@520,回车

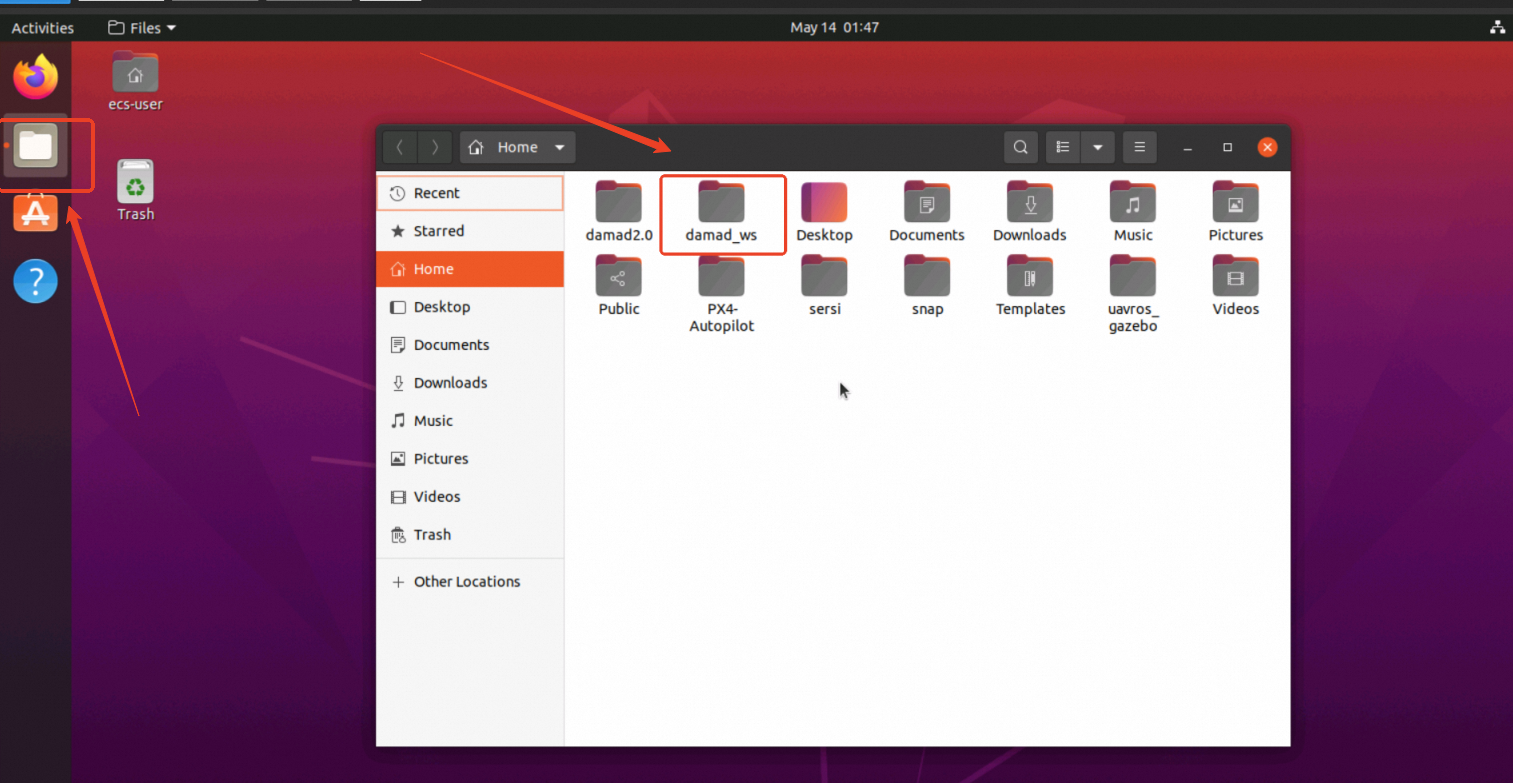

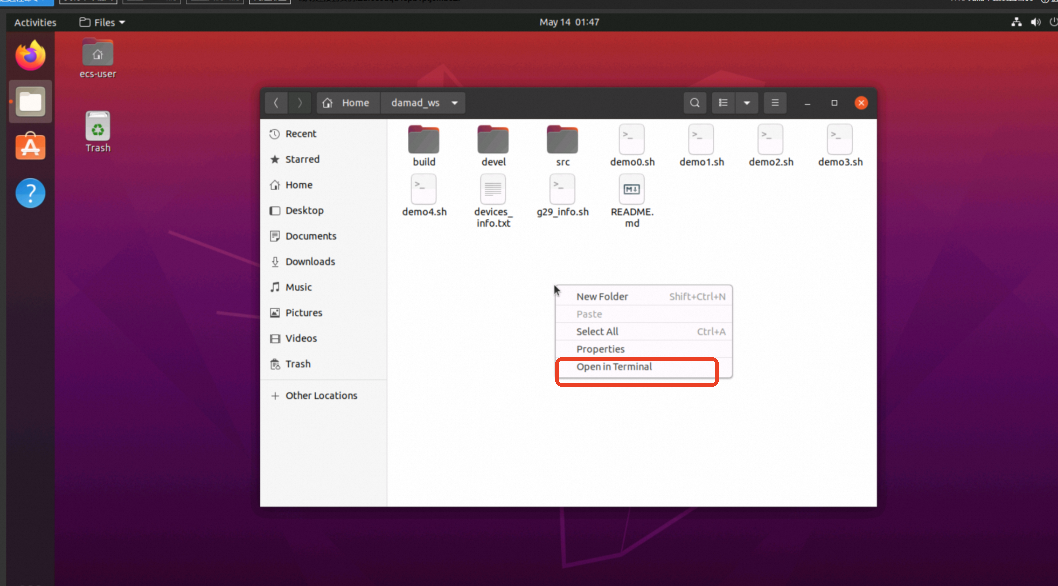

进入镜像后,点击左侧第2个图标,打开文件夹后点击damad_ws文件夹

进入damad_ws文件夹,在空白处点击鼠标右键,选择Open in Terminal

2、代码部分

本实验程序执行需要调用~/damad_ws/demo1.sh

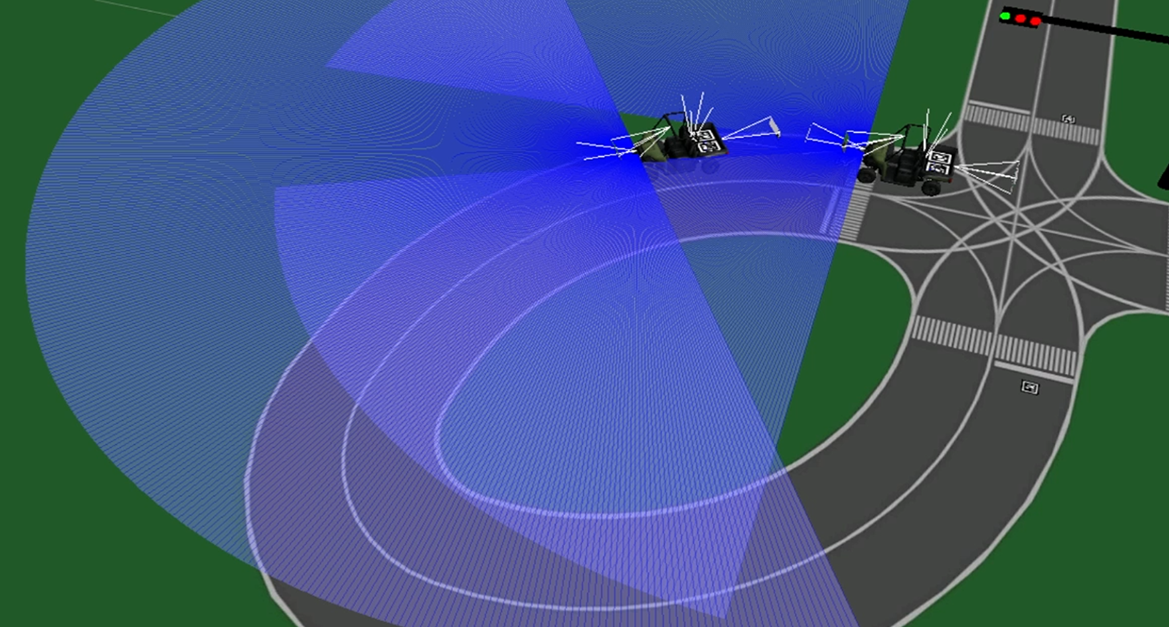

`仿真环境部分` # 生成带有ugv0和ugv1的仿真环境 gnome-terminal -t "gazebo_ugv" -- bash -c 'roslaunch px4 ugv1.launch' sleep 15 # 先生成ugv再生成uav,防止uav掉到ugv下 # 生成带有uav0和uav1的仿真环境,每辆ugv上一架uav gnome-terminal -t "gazebo_uav" -- bash -c 'roslaunch px4 uav1.launch' sleep 45 `红绿灯部分` # 开启红绿灯 gnome-terminal -t "traffic_light" -- bash -c 'source devel/setup.bash;rosrun control_pkg traffic_light.py' `uav部分` # uav0起飞,稳定在某一高度下并识别跟随ugv0上的左边二维码 gnome-terminal -t "iris0_follow" -- bash -c 'source devel/setup.bash;rosrun control_pkg iris0_follow_qrcode.py;bash' sleep 20 # uav1起飞,稳定在某一高度下并识别跟随ugv1上的左边二维码,iris1_callback回调函数里tag.tag_id == 16(ugv1左边二维码) gnome-terminal -t "iris1_follow" -- bash -c 'source devel/setup.bash;rosrun control_pkg iris1_follow_qrcode.py;bash' sleep 60 `ugv部分` gnome-terminal -t "rover0_servo_commands" -- bash -c 'source devel/setup.bash;rosrun control_pkg rover0_servo_commands.py' gnome-terminal -t "rover1_servo_commands" -- bash -c 'source devel/setup.bash;rosrun control_pkg rover1_servo_commands.py' # ugv1准备跟车 gnome-terminal -t "follow" -- bash -c 'source devel/setup.bash;rosrun control_pkg follow.py;bash' # ugv0巡线 gnome-terminal -t "rover0_lane" -- bash -c 'source devel/setup.bash;rosrun control_pkg lane.py;bash'首先通过运行~/PX4-Autopilot/launch中的ugv1.launch文件加载gazebo场景并生成车辆,随后通过运行~/PX4-Autopilot/launch中的uav1.launch文件生成无人机。

场景生成完毕后,运行~/damad_ws/src/control_pkg/src中的traffic_light.py,开启场景中红绿灯模型的变化以及ROS红绿灯信号的发布。

#! /usr/bin/env python3 import rospy from gazebo_msgs.msg import LinkState from gazebo_msgs.srv import SetLinkState, GetLinkState from std_msgs.msg import String, Header import time #改模型红绿灯位置 #切换信号时先把之前信号模型调换复原再修改新的信号灯 def traffic_light_change(x, y, signal): #红绿灯太多影响程序运行,暂时只留cantilevered_light_0_0 if x > 0 or y > 0: return # 根据当前信号选择需要修改的link名 if signal == 0: link_name0 = 'cantilevered_light_%d_%d::traffic_light::left_off'%(x, y) link_name1 = 'cantilevered_light_%d_%d::traffic_light::left_on'%(x, y) elif signal == 1: link_name0 = 'cantilevered_light_%d_%d::traffic_light::middle_off'%(x, y) link_name1 = 'cantilevered_light_%d_%d::traffic_light::middle_on'%(x, y) elif signal == 2: link_name0 = 'cantilevered_light_%d_%d::traffic_light::right_off'%(x, y) link_name1 = 'cantilevered_light_%d_%d::traffic_light::right_on'%(x, y) # 获取左/中/右的红绿灯模型link state current_state0 = get_link_state_proxy(link_name0, reference_frame) link_state0 = LinkState() link_state0.link_name = link_name0 current_state1 = get_link_state_proxy(link_name1, reference_frame) link_state1 = LinkState() link_state1.link_name = link_name1 # 调换左/中/右的红绿灯模型 link_state0.pose = current_state1.link_state.pose link_state0.twist = current_state0.link_state.twist link_state0.reference_frame = reference_frame set_link_state_proxy(link_state0) link_state1.pose = current_state0.link_state.pose link_state1.twist = current_state1.link_state.twist link_state1.reference_frame = reference_frame set_link_state_proxy(link_state1) #定时切换左中右信号 def traffic_light_signal(): global currentSignal, lastTime, signals if time.time() - lastTime >= changeRate: for i in range(len(signals)): for j in range(len(signals[0])): traffic_light_change(i, j, signals[i][j]) currentSignal = (currentSignal + 1) % 3 for i in range(len(signals)): for j in range(len(signals[0])): traffic_light_change(i, j, currentSignal) signals[i][j] = currentSignal lastTime = time.time() pub.publish(str(signals)) rospy.loginfo("Current Traffic Light is " + str(signals)) if __name__ == '__main__': # 保存每个信号灯目前信号 signals = [[0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]] changeRate = 5 #5秒变信号 currentSignal = 0 #0左 1中 2右 lastTime = time.time() rospy.init_node("traffic_light") pub = rospy.Publisher("traffic_light", String,queue_size=1) # 等待并调用gazebo中get_link_state和set_link_state服务 rospy.wait_for_service('/gazebo/get_link_state') rospy.wait_for_service('/gazebo/set_link_state') get_link_state_proxy= rospy.ServiceProxy('/gazebo/get_link_state', GetLinkState) set_link_state_proxy = rospy.ServiceProxy('/gazebo/set_link_state', SetLinkState) reference_frame = 'world' rate = rospy.Rate(10) while not rospy.is_shutdown(): traffic_light_signal() rate.sleep()将所有红绿灯的信号作为一个二元数组,经过一定的时间间隔,将数组通过字符串形式发布,随后通过获取模型的link_state,将每个红绿灯模型中红绿灯link的位置交换实现红绿灯的切换。

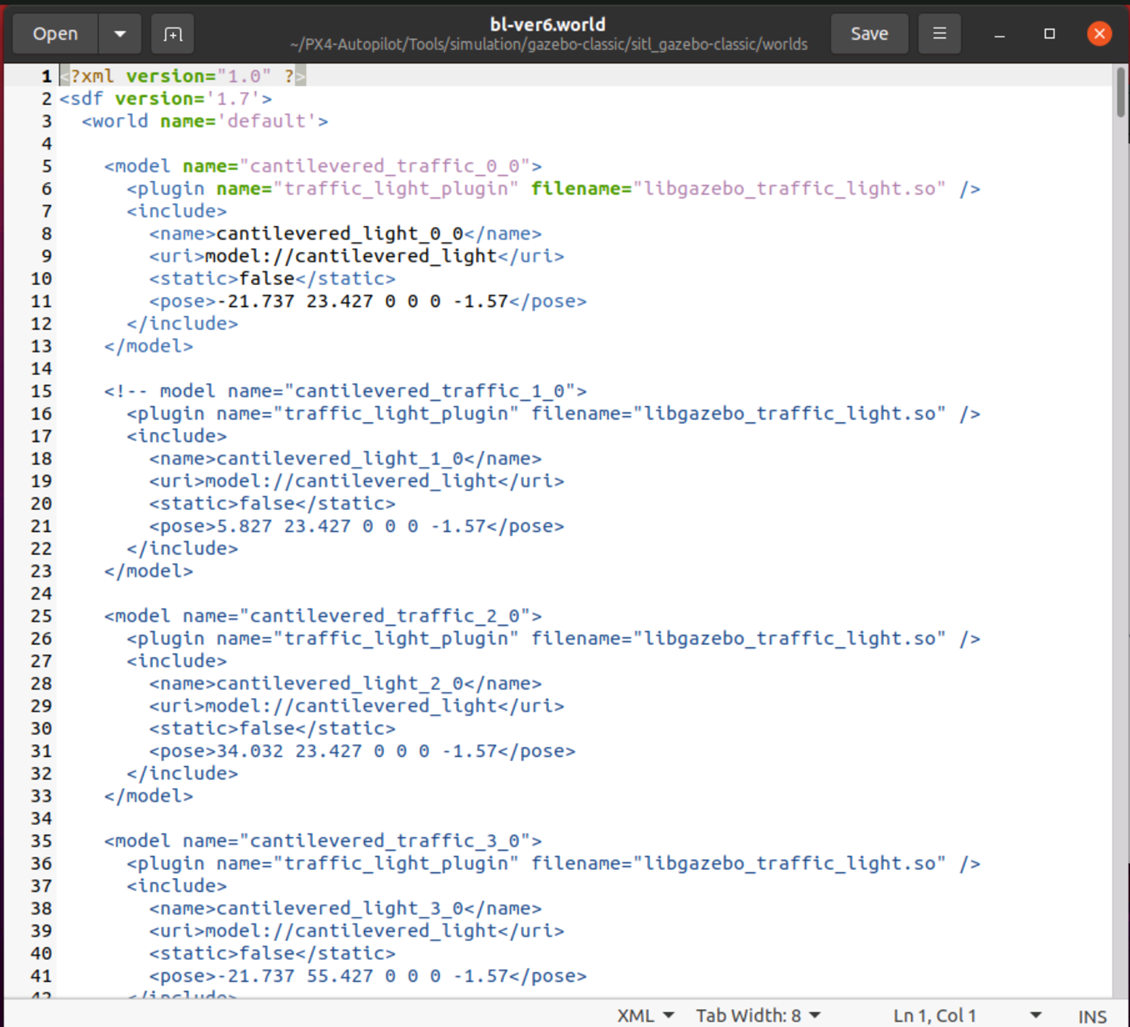

由于红绿灯过多会消耗大量资源,所以目前只生成一个红绿灯,并且traffic_light.py代码中只修改坐标为(0, 0)的红绿灯模型。可以根据需要在~/PX4-Autopilot/Tools/simulation/gazebo-classic/sitl_gazebo-classic/worlds/bl-ver6.world文件中增减红绿灯个数。

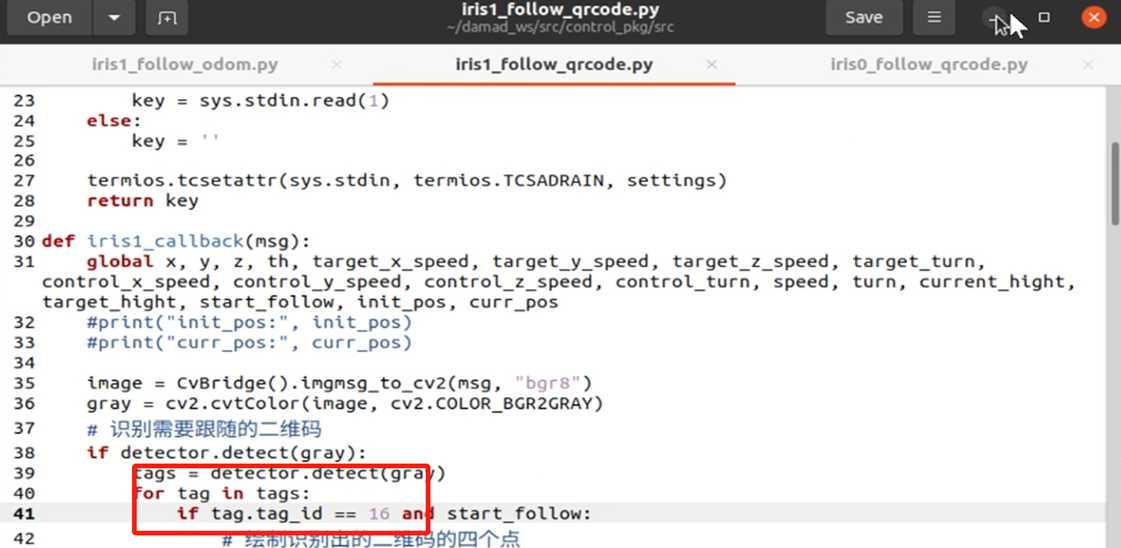

开启红绿灯后,uav0和uav1陆续起飞,稳定在某一高度下并识别跟随二维码,其中~/damad_ws/src/control_pkg/src/iris1_follow_qrcode.py中的iris1_callback回调函数里需要修改为tag.tag_id == 16(ugv1左边二维码)。

以~/damad_ws/src/control_pkg/src/iris0_follow_qrcode.py为例:

#!/usr/bin/env python3 #二维码设置路径 uavros_gazebo/models/landing_pad_rover import rospy from sensor_msgs.msg import Image from cv_bridge import CvBridge import cv2 from geometry_msgs.msg import Twist from geometry_msgs.msg import PoseStamped from mavros_msgs.srv import * from mavros_msgs.msg import State import math import sys, select, termios, tty import pyapriltags import time #获取键值函数 def getKey(): tty.setraw(sys.stdin.fileno()) rlist, _, _ = select.select([sys.stdin], [], [], 0.1) if rlist: key = sys.stdin.read(1) else: key = '' termios.tcsetattr(sys.stdin, termios.TCSADRAIN, settings) return key def iris0_callback(msg): global x, y, z, th, target_x_speed, target_y_speed, target_z_speed, target_turn, control_x_speed, control_y_speed, control_z_speed, control_turn, speed, turn, current_hight, target_hight, start_follow, init_pos, curr_pos image = CvBridge().imgmsg_to_cv2(msg, "bgr8") gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY) # 识别需要跟随的二维码 if detector.detect(gray): tags = detector.detect(gray) for tag in tags: if tag.tag_id == 18 and start_follow: # 绘制识别出的二维码的四个点 cv2.circle(image, tuple(tag.corners[0].astype(int)), 4, (255, 0, 0), 2) # left-top cv2.circle(image, tuple(tag.corners[1].astype(int)), 4, (255, 0, 0), 2) # right-top cv2.circle(image, tuple(tag.corners[2].astype(int)), 4, (255, 0, 0), 2) # right-bottom cv2.circle(image, tuple(tag.corners[3].astype(int)), 4, (255, 0, 0), 2) # left-bottom k = (tag.corners[0].astype(int)[1] - tag.corners[3].astype(int)[1]) / (tag.corners[0].astype(int)[0] - tag.corners[3].astype(int)[0]) # 计算二维码中心点 tag_x = int((tag.corners[1].astype(int)[0] + tag.corners[0].astype(int)[0]) / 2) tag_y = int((tag.corners[1].astype(int)[1] + tag.corners[2].astype(int)[1]) / 2) # 计算图像中心点 image_x = int(image.shape[0] / 2) image_y = int(image.shape[1] / 2) #print("二维码中心点坐标为:", tag_x, tag_y) #print("图像中心点坐标为:", image_x, image_y) # 根据二维码中心点和图像中心点的xy坐标差距设置相应的飞行速度 x = (image_y - tag_y) * 0.019 y = (image_x - tag_x) * 0.019 # 升高到一定高度时开始识别二维码 if (target_hight - current_hight) < 2: start_follow = True # 无人机保持指定高度飞行 if (current_hight - target_hight) > 0.2: #print("下降") z = -0.5 elif (current_hight - target_hight) < -0.2: #print("升高") z = 0.5 else: z = 0 if not start_follow: x = -init_pos.x + curr_pos.x y = -init_pos.y + curr_pos.y th = 0 #根据速度与方向计算目标速度 target_x_speed = speed * x target_y_speed = speed * y target_z_speed = speed * z target_turn = turn * th #x方向平滑控制,计算前进后退实际控制速度 if target_x_speed > control_x_speed: control_x_speed = min( target_x_speed, control_x_speed + 0.1 ) elif target_x_speed < control_x_speed: control_x_speed = max( target_x_speed, control_x_speed - 0.1 ) else: control_x_speed = target_x_speed #y方向平滑控制,计算前进后退实际控制速度 if target_y_speed > control_y_speed: control_y_speed = min( target_y_speed, control_y_speed + 0.1 ) elif target_y_speed < control_y_speed: control_y_speed = max( target_y_speed, control_y_speed - 0.1 ) else: control_y_speed = target_y_speed #z方向平滑控制,实际控制速度 if target_z_speed > control_z_speed: control_z_speed = min( target_z_speed, control_z_speed + 0.1 ) elif target_z_speed < control_z_speed: control_z_speed = max( target_z_speed, control_z_speed - 0.1 ) else: control_z_speed = target_z_speed #平滑控制,计算转向实际控制速度 if target_turn > control_turn: control_turn = min( target_turn, control_turn + 0.5 ) elif target_turn < control_turn: control_turn = max( target_turn, control_turn - 0.5 ) else: control_turn = target_turn # 计算出y方向的sin值 y_sin = math.sin(sita/180*math.pi) # 如果小于0,则改为正数 if y_sin < 0: y_sin = -y_sin # 乘以y分量的正负(通过四元数z*w获得,z*w>0,y分量在x轴上方) y_sin = y_sin * zf twist = Twist() #创建ROS速度话题变量 #正反朝向前进时 if x != 0 and y == 0: twist.linear.x = control_x_speed * math.cos(sita/180*math.pi) twist.linear.y = control_x_speed * y_sin # 朝向速度乘以y轴sin值 #左右平移时 if y != 0 and x == 0: twist.linear.x = control_y_speed * -y_sin twist.linear.y = control_y_speed * math.cos(sita/180*math.pi) #斜向移动? if x != 0 and y != 0: twist.linear.x = control_x_speed * math.cos(sita/180*math.pi) twist.linear.y = control_y_speed * math.cos(sita/180*math.pi) twist.linear.z = control_z_speed twist.angular.x = 0 twist.angular.y = 0 twist.angular.z = control_turn pub.publish(twist) #ROS发布速度话题 cv2.imshow("iris0_image", image) cv2.waitKey(1) # 回调函数:订阅无人机位姿 def pose_cb(m): global sita global z global w global zf global current_hight global init_pos, curr_pos if init_pos is None: init_pos = m.pose.position curr_pos = m.pose.position current_hight = m.pose.position.z z = m.pose.orientation.z w = m.pose.orientation.w # 计算朝向在x轴的上方还是下方 if z*w > 0: zf = 1 else: zf = -1 sita = 2*math.acos(w)*180/math.pi # rospy.loginfo('%.2f\r',sita) # 回调函数:订阅mavros状态 def state_cb(state): global current_state current_state = state def takeoff(): global current_state x = -1 y = 0 while(1): key = getKey() #获取键值,去除后无法起飞? if x == 0: # 开启offboard模式 setModeServer(custom_mode='OFFBOARD') print("UAV0 Offboard enabled") x += 1 elif x == 1: # 解锁,准备起飞 armServer(True) print("UAV0 Vehicle armed") x += 1 time.sleep(2) elif x == 2: # 起飞 print("UAV0 Vehicle Takeoff") setModeServer(custom_mode='AUTO.TAKEOFF') x += 1 time.sleep(5) elif x == 3: # 获得控制权 setModeServer(custom_mode='OFFBOARD') x += 1 print("UAV0 Offboard enabled") elif x == 4: break twist = Twist() #创建ROS速度话题变量 pub.publish(twist) #ROS发布速度话题,去除后无法起飞? y += 1 if y == 10: x = 0 #主函数 if __name__=="__main__": settings = termios.tcgetattr(sys.stdin) #获取键值初始化,读取终端相关属性 detector = pyapriltags.Detector() #二维码识别器 rospy.init_node('uav0_follow') #创建ROS节点 pub = rospy.Publisher('uav0/mavros/setpoint_velocity/cmd_vel_unstamped', Twist, queue_size=5) #创建速度话题发布者 init_pos = None curr_pos = None # 订阅无人机位姿 current_hight = 0 # 当前高度 target_hight = 6.5 #目标高度 start_follow = False #第一次达到目标高度后开始识别二维码跟踪 x = 0 #前进后退方向 y = 0 #左右移动方向 z = 0 #上下移动方向 th = 0 #转向/横向移动方向 target_x_speed = 0 #前进后退目标速度 target_y_speed = 0 #左右平移目标速度 target_z_speed = 0 #上下运动目标速度 target_turn = 0 #转向目标速度 control_x_speed = 0 #前进后退实际控制速度 control_y_speed = 0 #左右平移实际控制速度 control_z_speed = 0 #上下运动实际控制速度 control_turn = 0 #转向实际控制速度 speed = 1 #默认移动速度 m/s turn = 1 #默认转向速度 rad/s sita = 0.0 # 朝向 z = 0 w = 0 zf = 1 # 订阅uav0位姿信息 rospy.Subscriber('uav0/mavros/local_position/pose',PoseStamped, pose_cb) # 订阅mavros状态 current_state = State() rospy.Subscriber('uav0/mavros/state',State,state_cb) # 定义起飞降落服务客户端(起飞,降落) setModeServer = rospy.ServiceProxy('uav0/mavros/set_mode',SetMode) armServer = rospy.ServiceProxy('uav0/mavros/cmd/arming', CommandBool) # uav0起飞 takeoff() # 订阅uav0上的摄像头图像信息 iris0_sub = rospy.Subscriber("/iris0/fpv_cam/image_raw", Image, iris0_callback) rospy.spin() #程序结束前设置终端相关属性 termios.tcsetattr(sys.stdin, termios.TCSADRAIN, settings)在生成uav0并连接成功后,通过uav0/mavros/set_mode和uav0/mavros/cmd/arming服务,顺序执行解锁、起飞和获得控制权操作。

同时为了保持起飞阶段的平稳,订阅自身位姿信息,并根据初始位姿的xy坐标调整自身坐标。在升高到指定高度后保持该高度。

根据订阅/iris0/fpv_cam/image_raw消息获得的图像数据进行二维码的识别,根据二维码定位坐标的中心坐标与图像的中心坐标的偏移来设置对应轴的速度,随后将转换后的Twist类型消息发布以控制uav0始终跟随二维码移动。

在无人机起飞并保持对二维码跟随后,分别开启车辆底盘和后车跟车。后车跟车调用的是~/damad_ws/src/control_pkg/src/follow.py程序。

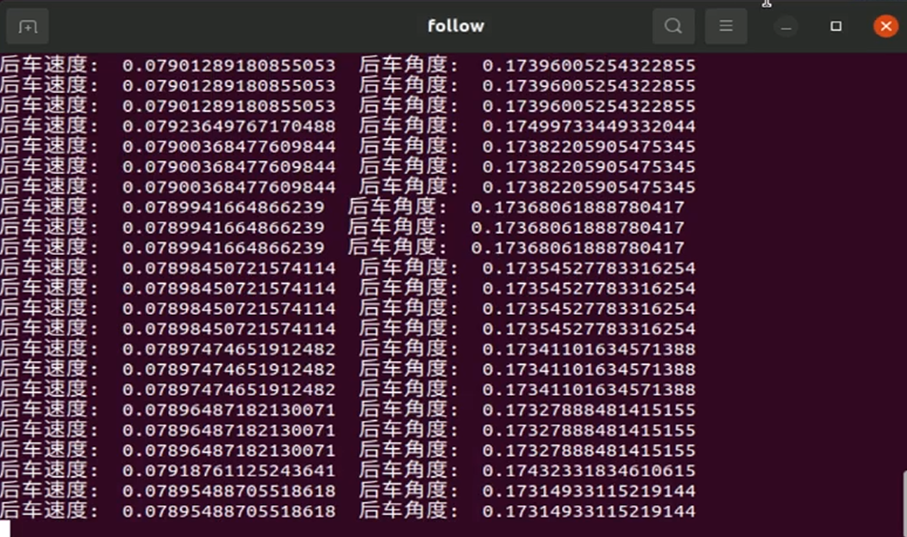

#! /usr/bin/env python3 import rospy from nav_msgs.msg import Odometry from ackermann_msgs.msg import AckermannDriveStamped import tf import math def pose_callback0(msg): global pose_flag0, ugv0_msg pose_flag0 = True ugv0_msg.child_frame_id = msg.child_frame_id ugv0_msg.header = msg.header ugv0_msg.pose = msg.pose ugv0_msg.twist = msg.twist def pose_callback1(msg): global pose_flag1, ugv1_msg pose_flag1 = True ugv1_msg.child_frame_id = msg.child_frame_id ugv1_msg.header = msg.header ugv1_msg.pose = msg.pose ugv1_msg.twist = msg.twist def follow(): global PI, pose_flag0, pose_flag1, initial_distance, thresh_distance, target_point, ugv0_msg, ugv1_msg, leader_msg_queue, msg, pub if not pose_flag0 or not pose_flag1: #接收到odometry消息后才开始运行 return leader_msg_queue.append(ugv0_msg) #求目标点当前距离 target_distance = math.sqrt((target_point.pose.pose.position.x - ugv1_msg.pose.pose.position.x) ** 2 + (target_point.pose.pose.position.y - ugv1_msg.pose.pose.position.y) ** 2) #求前后车当前距离 follower_distance = math.sqrt((target_point.pose.pose.position.x - ugv1_msg.pose.pose.position.x) ** 2 + (target_point.pose.pose.position.y - ugv1_msg.pose.pose.position.y) ** 2) #print(target_distance, follower_distance, initial_distance) #前后车当前距离小于初始距离时停车 if follower_distance < initial_distance: #print("距离太近自动停车") msg.drive.speed = 0; msg.drive.steering_angle = 0; msg.header.stamp = rospy.Time.now() pub.publish(msg); else: #target_point为初始值时直接赋值 if target_point.pose.pose.position.x == 0 and target_point.pose.pose.position.y == 0: target_point = ugv0_msg #求前后车初始距离 initial_distance = math.sqrt((ugv0_msg.pose.pose.position.x - ugv1_msg.pose.pose.position.x) ** 2 + (ugv0_msg.pose.pose.position.y - ugv1_msg.pose.pose.position.y) ** 2) print("两车初始距离: ", initial_distance, "\n前车坐标:", ugv0_msg.pose.pose.position, "\n后车坐标:", ugv1_msg.pose.pose.position) #后车接近目标点时按队列方式从数组中取出下一个目标点 if target_distance < thresh_distance: target_point = leader_msg_queue[0] leader_msg_queue = leader_msg_queue[1:] #后车与目标点差距过大时清除并重开队列 elif target_distance > 5: leader_msg_queue = [] target_point = ugv0_msg #四元数转欧拉角 (roll, pitch, yaw) = tf.transformations.euler_from_quaternion([ugv1_msg.pose.pose.orientation.x, ugv1_msg.pose.pose.orientation.y, ugv1_msg.pose.pose.orientation.z, ugv1_msg.pose.pose.orientation.w]) gamma = yaw delta = math.atan2(target_point.pose.pose.position.y-ugv1_msg.pose.pose.position.y,target_point.pose.pose.position.x-ugv1_msg.pose.pose.position.x); if gamma < 0: gamma += (2 * PI) theta = delta - gamma if theta > PI: theta -= (2 * PI) elif theta < -PI: theta += (2 * PI) r = math.sqrt((ugv0_msg.pose.pose.position.x - ugv1_msg.pose.pose.position.x) ** 2 + (ugv0_msg.pose.pose.position.y - ugv1_msg.pose.pose.position.y) ** 2) k = 1.0 if r > 0.4: msg.drive.speed = 0.01 * r #修改数值调节跟车时后车速度 else: msg.drive.speed = 0.01 msg.drive.steering_angle = k * theta print("后车速度: ", msg.drive.speed, " 后车角度: ", msg.drive.steering_angle) msg.header.stamp = rospy.Time.now() pub.publish(msg); if __name__ == '__main__': PI = 3.1415926535 pose_flag0 = False pose_flag1 = False initial_distance = 0 #前后车初始距离 thresh_distance = 0.2 #后车和目标点距离差 target_point = Odometry() #目标点 ugv0_msg = Odometry() #前车里程计消息 ugv1_msg = Odometry() #后车里程计消息 leader_msg_queue = [] #新建Ackermann消息 msg = AckermannDriveStamped() msg.header.frame_id = "base_link1" msg.drive.acceleration = 1 msg.drive.jerk = 1 msg.drive.steering_angle_velocity = 1 rospy.init_node("follow") pub = rospy.Publisher("ugv1/ackermann_cmd_mux/output", AckermannDriveStamped,queue_size=1) # 订阅前车ugv0里程计消息 rospy.Subscriber("/ugv0/odom", Odometry, pose_callback0) # 订阅后车ugv1里程计消息 rospy.Subscriber("/ugv1/odom", Odometry, pose_callback1) rate = rospy.Rate(100) while not rospy.is_shutdown(): follow() rate.sleep()首先订阅前后车的odom消息,当接收到订阅的消息后,计算出当前两车的初始距离作为安全距离。同时将前车odom数据加入队列作为目标值,利用队列中首位odom数据与后车当前odom数据进行计算,设置后车的AckermannDriveStamped类型消息中的速度和转角并发布。当前后车距离差距过大时清空消息队列,重新开始跟车。

当跟车程序启动完毕后,开启前车巡线行驶。巡线行驶调用的是~/damad_ws/src/control_pkg/src/lane.py程序。

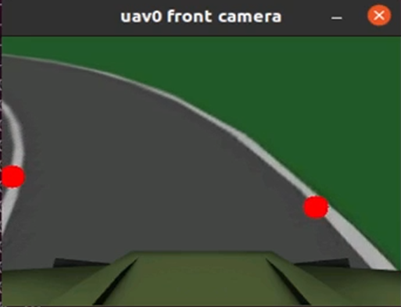

#! /usr/bin/env python3 import rospy, cv2, cv_bridge, numpy from sensor_msgs.msg import Image from sensor_msgs.msg import CompressedImage from ackermann_msgs.msg import AckermannDriveStamped from std_msgs.msg import String import pyapriltags import copy def set_roi_forward(h, w, mask, position): if position == "left": mask[0:int(h / 3), 0:w] = 0 mask[0:h, int(w / 4):w] = 0 if position == "right": mask[0:int(h / 3), 0:w] = 0 mask[0:h, 0:int(w / 4)] = 0 return mask def straight(image): print("直行通过十字路口") global pub, msg msg.drive.speed = 0.2 msg.drive.steering_angle = 0 msg.header.stamp = rospy.Time.now() pub.publish(msg) cv2.imshow("uav0 front camera", image) cv2.waitKey(1) def turn_left(image): print("左转通过十字路口") global pub, msg msg.drive.speed = 0.1 msg.drive.steering_angle = 0.18 msg.header.stamp = rospy.Time.now() pub.publish(msg) cv2.imshow("uav0 front camera", image) cv2.waitKey(1) def turn_right(image): print("右转通过十字路口") global pub, msg msg.drive.speed = 0.1 msg.drive.steering_angle = -0.28 msg.header.stamp = rospy.Time.now() pub.publish(msg) cv2.imshow("uav0 front camera", image) cv2.waitKey(1) def lane(image): global into_crossroads, signal, msg, pub hsv = cv2.cvtColor(image, cv2.COLOR_BGR2HSV) h, w, _ = image.shape #cv2.imshow("hsv", hsv) lower_white = numpy.array([0, 0, 100]) upper_white = numpy.array([0, 0, 255]) mask = cv2.inRange(hsv, lower_white, upper_white) #cv2.imshow("mask", mask) roi_left = set_roi_forward(h, w, copy.deepcopy(mask), "left") #cv2.imshow("roi_left", roi_left) roi_right = set_roi_forward(h, w, copy.deepcopy(mask), "right") #cv2.imshow("roi_right", roi_right) #左线质心 M_left = cv2.moments(roi_left) cx_left = 0 cy_left = h-1 if M_left['m00'] > 0: cx_left = int(M_left['m10'] / M_left['m00']) #质心x坐标 cy_left = int(M_left['m01'] / M_left['m00']) #质心y坐标 cv2.circle(image, (cx_left, cy_left), 10, (0, 0, 255), -1) #右线质心 M_right = cv2.moments(roi_right) cx_right = w-1 cy_right = h-1 if M_right['m00'] > 0: cx_right = int(M_right['m10'] / M_right['m00']) #质心x坐标 cy_right = int(M_right['m01'] / M_right['m00']) #质心y坐标 cv2.circle(image, (cx_right, cy_right), 10, (0, 0, 255), -1) msg.drive.speed = 0.08 msg.drive.steering_angle = -float((cx_left + cx_right - w + 50) / 100) msg.header.stamp = rospy.Time.now() pub.publish(msg) print(msg.drive.speed, msg.drive.steering_angle) cv2.imshow("uav0 front camera", image) cv2.waitKey(1) def detect_tag(image): global into_crossroads, signal, detector gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY) if detector.detect(gray): tags = detector.detect(gray) for tag in tags: cv2.circle(image, tuple(tag.corners[0].astype(int)), 4, (255, 0, 0), 2) # left-top cv2.circle(image, tuple(tag.corners[1].astype(int)), 4, (255, 0, 0), 2) # right-top cv2.circle(image, tuple(tag.corners[2].astype(int)), 4, (255, 0, 0), 2) # right-bottom cv2.circle(image, tuple(tag.corners[3].astype(int)), 4, (255, 0, 0), 2) # left-bottom print("tag id: ", tag.tag_id) if tag.tag_id == 0: into_crossroads = True print("检测到进入十字路口二维码") return if tag.tag_id == 1: into_crossroads = False print("检测到进入车道二维码") return def image_callback(msg): bridge = cv_bridge.CvBridge() frame = bridge.imgmsg_to_cv2(msg, 'bgr8') lane(frame) pass def traffic_light_callback(msg): global signal signal = msg.data if __name__ == '__main__': rospy.init_node("ugv0") #新建Ackermann消息 msg = AckermannDriveStamped() msg.header.frame_id = "base_link0" msg.drive.acceleration = 1 msg.drive.jerk = 1 msg.drive.steering_angle_velocity = 1 detector = pyapriltags.Detector() #二维码识别器 into_crossroads = False #进入十字路口 signal = "" #交通灯信号 pub = rospy.Publisher("ugv0/ackermann_cmd_mux/output", AckermannDriveStamped,queue_size=1) rospy.Subscriber("/rover0/fpv_cam3/image_raw", Image, image_callback) rospy.Subscriber("traffic_light", String, traffic_light_callback) rospy.spin() passugv0通过订阅车前方相机发布的/rover0/fpv_cam3/image_raw消息获取图像数据。接收到图像数据后,识别车道两旁的白色车道线,根据两侧车道线的质心坐标与中心坐标的偏移设置转角数据,经过转换并设置AckermannDriveStamped消息的msg.drive.speed, msg.drive.steering_angle数据后发布。

3、实验操作

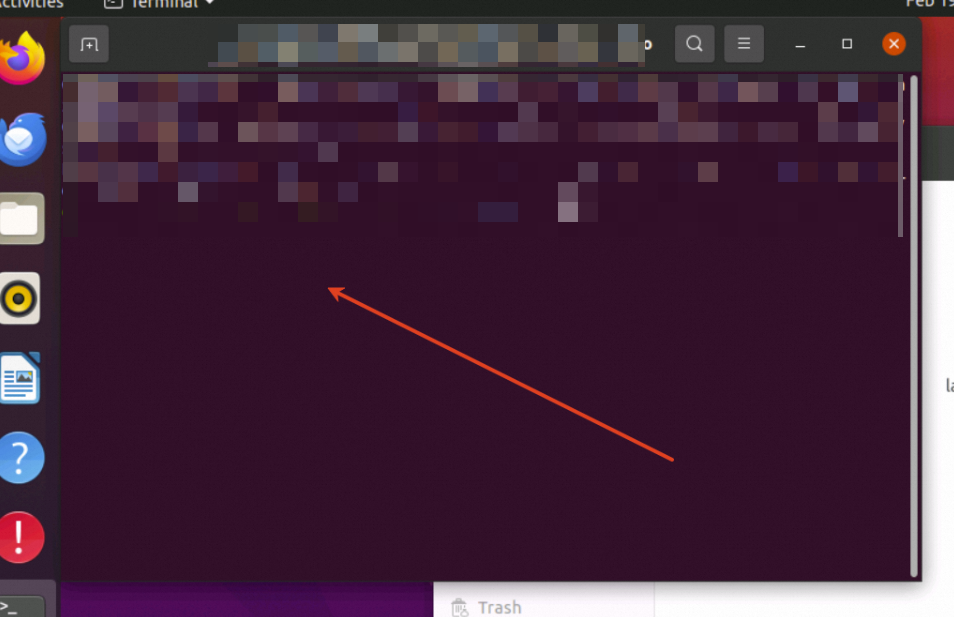

在~/damad_ws文件夹中新建终端,输出以下命令开启程序。

./demo1.sh

清理资源

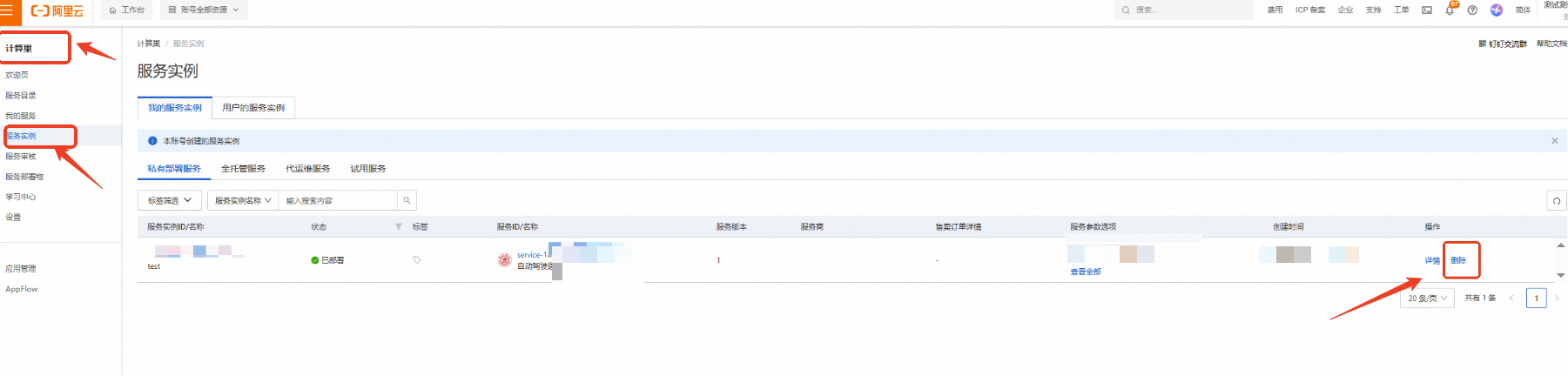

计算巢—服务实例—复制服务实例ID,点击【删除】

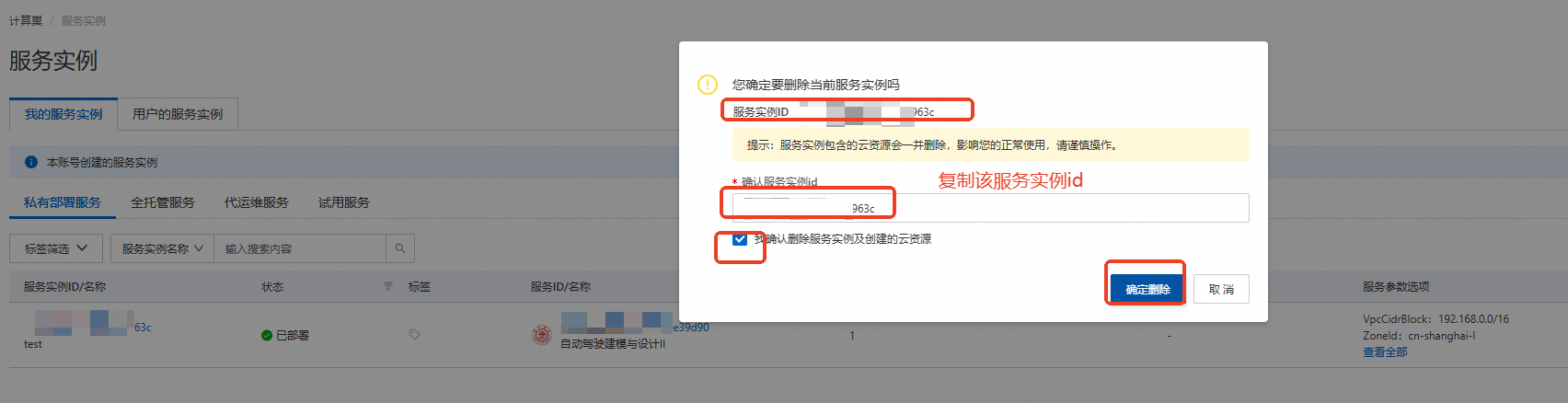

在弹窗粘贴服务实例ID,并进行勾选,点击【确定删除】

完成安全验证后,即可成功释放实例。

回到云服务器ECS——实例,检查是否成功释放资源

关闭实验

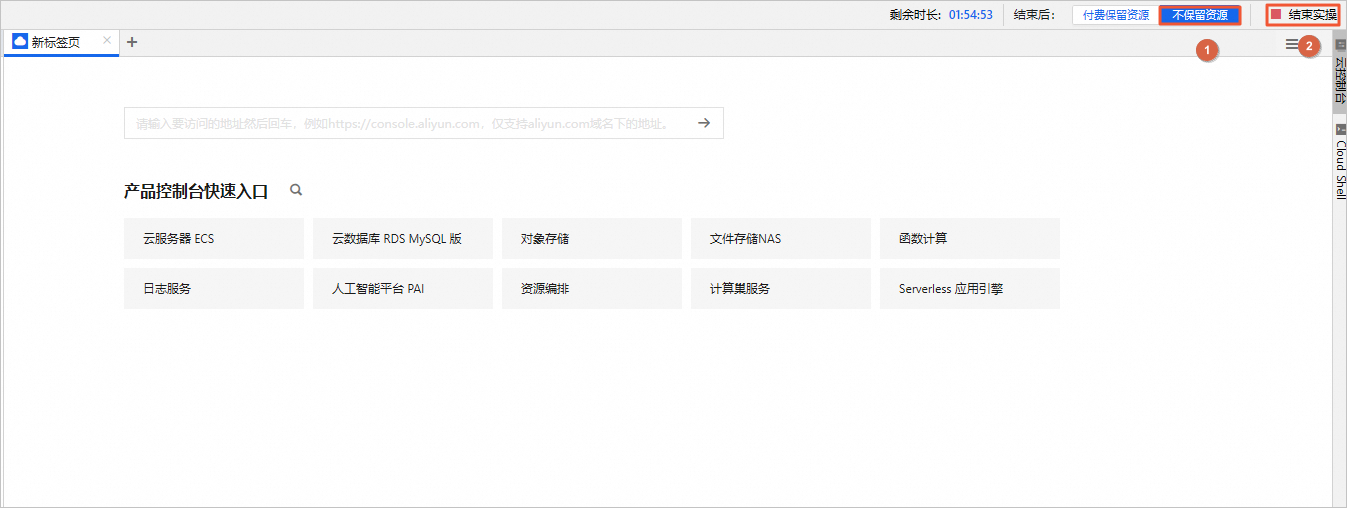

在完成实验后,如果无需继续使用资源,选择不保留资源,单击结束实操。在结束实操对话框中,单击确定。

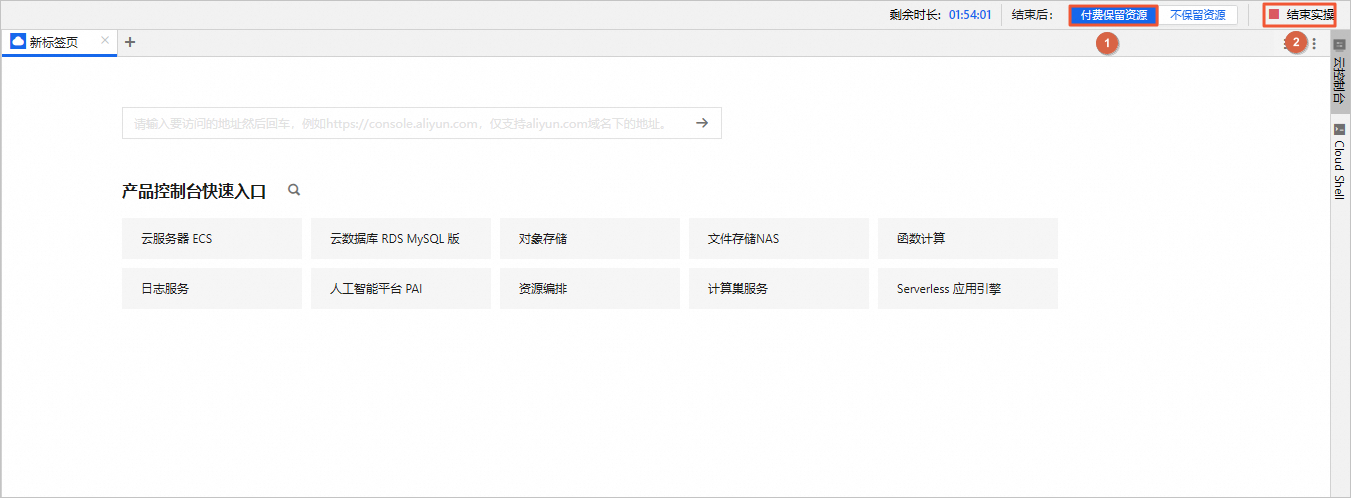

在完成实验后,如果需要继续使用资源,选择付费保留资源,单击结束实操。在结束实操对话框中,单击确定。请随时关注账户扣费情况,避免发生欠费。