视觉理解模型可以根据您传入的图片或视频进行回答,支持单图或多图的输入,适用于图像描述、视觉问答、物体定位等多种任务。

在线体验:访问阿里云百炼控制台,在页面右上角选择目标地域,进入视觉模型页面进行体验。

快速开始

前提条件

如果通过 SDK 进行调用,需安装SDK,其中 DashScope Python SDK 版本不低于1.24.6,DashScope Java SDK 版本不低于 2.21.10。

以下示例演示了如何调用模型描述图像内容。关于本地文件和图像限制的说明,请参见如何传入本地文件、图像限制章节。

OpenAI兼容

Python

import os

from openai import OpenAI

client = OpenAI(

# 若没有配置环境变量,请用阿里云百炼API Key将下行替换为:api_key="sk-xxx",

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

api_key=os.getenv("DASHSCOPE_API_KEY"),

# 各地域配置不同,请根据实际地域修改

base_url="https://dashscope.aliyuncs.com/compatible-mode/v1",

)

completion = client.chat.completions.create(

model="qwen3.6-plus", # 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages=[

{

"role": "user",

"content": [

{

"type": "image_url",

"image_url": {

"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"

},

},

{"type": "text", "text": "图中描绘的是什么景象?"},

],

},

],

)

print(completion.choices[0].message.content)返回结果

这是一张在海滩上拍摄的照片。照片中,一个人和一只狗坐在沙滩上,背景是大海和天空。人和狗似乎在互动,狗的前爪搭在人的手上。阳光从画面的右侧照射过来,给整个场景增添了一种温暖的氛围。Node.js

import OpenAI from "openai";

const openai = new OpenAI({

// 若没有配置环境变量,请用百炼API Key将下行替换为:apiKey: "sk-xxx"

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

apiKey: process.env.DASHSCOPE_API_KEY,

// 各地域配置不同,请根据实际地域修改

baseURL: "https://dashscope.aliyuncs.com/compatible-mode/v1"

});

async function main() {

const response = await openai.chat.completions.create({

model: "qwen3.6-plus", // 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages: [

{

role: "user",

content: [{

type: "image_url",

image_url: {

"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"

}

},

{

type: "text",

text: "图中描绘的是什么景象?"

}

]

}

]

});

console.log(response.choices[0].message.content);

}

main()返回结果

这是一张在海滩上拍摄的照片。照片中,一个人和一只狗坐在沙滩上,背景是大海和天空。人和狗似乎在互动,狗的前爪搭在人的手上。阳光从画面的右侧照射过来,给整个场景增添了一种温暖的氛围。curl

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl --location 'https://dashscope.aliyuncs.com/compatible-mode/v1/chat/completions' \

--header "Authorization: Bearer $DASHSCOPE_API_KEY" \

--header 'Content-Type: application/json' \

--data '{

"model": "qwen3.6-plus",

"messages": [

{

"role": "user",

"content": [

{"type": "image_url", "image_url": {"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"}},

{"type": "text", "text": "图中描绘的是什么景象?"}

]

}]

}'返回结果

{

"choices": [

{

"message": {

"content": "这是一张在海滩上拍摄的照片。照片中,一个人和一只狗坐在沙滩上,背景是大海和天空。人和狗似乎在互动,狗的前爪搭在人的手上。阳光从画面的右侧照射过来,给整个场景增添了一种温暖的氛围。",

"role": "assistant"

},

"finish_reason": "stop",

"index": 0,

"logprobs": null

}

],

"object": "chat.completion",

"usage": {

"prompt_tokens": 1270,

"completion_tokens": 54,

"total_tokens": 1324

},

"created": 1725948561,

"system_fingerprint": null,

"model": "qwen3.6-plus",

"id": "chatcmpl-0fd66f46-b09e-9164-a84f-3ebbbedbac15"

}DashScope

Python

import os

import dashscope

# 各地域配置不同,请根据实际地域修改

dashscope.base_http_api_url = "https://dashscope.aliyuncs.com/api/v1"

messages = [

{

"role": "user",

"content": [

{"image": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"},

{"text": "图中描绘的是什么景象?"}]

}]

response = dashscope.MultiModalConversation.call(

# 若没有配置环境变量, 请用百炼API Key将下行替换为: api_key ="sk-xxx"

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

api_key = os.getenv('DASHSCOPE_API_KEY'),

model = 'qwen3.6-plus', # 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages = messages

)

print(response.output.choices[0].message.content[0]["text"])返回结果

是一张在海滩上拍摄的照片。照片中有一位女士和一只狗。女士坐在沙滩上,微笑着与狗互动。狗戴着项圈,似乎在与女士握手。背景是大海和天空,阳光洒在她们身上,营造出温馨的氛围。Java

import java.util.Arrays;

import java.util.Collections;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.JsonUtils;

import com.alibaba.dashscope.utils.Constants;

public class Main {

// 各地域配置不同,请根据实际地域修改

static {Constants.baseHttpApiUrl="https://dashscope.aliyuncs.com/api/v1";}

public static void simpleMultiModalConversationCall()

throws ApiException, NoApiKeyException, UploadFileException {

MultiModalConversation conv = new MultiModalConversation();

MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())

.content(Arrays.asList(

Collections.singletonMap("image", "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"),

Collections.singletonMap("text", "图中描绘的是什么景象?"))).build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// 若没有配置环境变量,请用百炼API Key将下行替换为:.apiKey("sk-xxx")

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model("qwen3.6-plus") // 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

.messages(Arrays.asList(userMessage))

.build();

MultiModalConversationResult result = conv.call(param);

System.out.println(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));

}

public static void main(String[] args) {

try {

simpleMultiModalConversationCall();

} catch (ApiException | NoApiKeyException | UploadFileException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}返回结果

这是一张在海滩上拍摄的照片。照片中有一个穿着格子衬衫的人和一只戴着项圈的狗。人和狗面对面坐着,似乎在互动。背景是大海和天空,阳光洒在他们身上,营造出温暖的氛围。curl

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl -X POST https://dashscope.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.6-plus",

"input":{

"messages":[

{

"role": "user",

"content": [

{"image": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"},

{"text": "图中描绘的是什么景象?"}

]

}

]

}

}'返回结果

{

"output": {

"choices": [

{

"finish_reason": "stop",

"message": {

"role": "assistant",

"content": [

{

"text": "这是一张在海滩上拍摄的照片。照片中有一个穿着格子衬衫的人和一只戴着项圈的狗。他们坐在沙滩上,背景是大海和天空。阳光从画面的右侧照射过来,给整个场景增添了一种温暖的氛围。"

}

]

}

}

]

},

"usage": {

"output_tokens": 55,

"input_tokens": 1271,

"image_tokens": 1247

},

"request_id": "ccf845a3-dc33-9cda-b581-20fe7dc23f70"

}模型选型

Qwen3.6:最新一代视觉理解模型,相比Qwen3.5,在代码开发、泛化推理、多模态能力(万物识别、OCR、物体定位)等方面显著增强。

qwen3.6-plus:性能最强,推荐优先使用。qwen3.6-flash:速度更快,成本更低。qwen3.6-35b-a3b:Qwen3.6 开源系列。

Qwen3.5:在多模态推理、2D/3D图像理解、复杂文档解析、视觉编程、视频理解、多模态智能体等任务上领先。

qwen3.5-plus:千问性能最强的视觉理解模型,推荐优先使用。qwen3.5-flash:速度更快,成本更低,是兼顾性能与成本的高性价比选择,适用于对响应速度敏感的场景。qwen3.5-397b-a17b、qwen3.5-122b-a10b、qwen3.5-27b、qwen3.5-35b-a3b:Qwen3.5 开源系列模型。

Qwen3-VL 也适用于高精度的物体识别与定位(包括 3D 定位)、 Agent 工具调用、文档和网页解析、复杂题目解答、长视频理解等任务。系列内模型对比如下:

qwen3-vl-plus:Qwen3-VL 系列中性能最强的模型。qwen3-vl-flash:速度更快,成本更低,是兼顾性能与成本的高性价比选择,适用于对响应速度敏感的场景。

Qwen2.5-VL 适用于简单的图像描述、短视频摘要提取等通用任务,系列内模型对比如下:

qwen-vl-max(属于Qwen2.5-VL):Qwen2.5-VL 系列中效果最佳的模型。qwen-vl-plus(属于Qwen2.5-VL):速度更快,在效果与成本之间实现良好平衡。

模型的名称、上下文、价格、快照版本等信息请参见百炼控制台;并发限流条件请参考限流。

模型效果

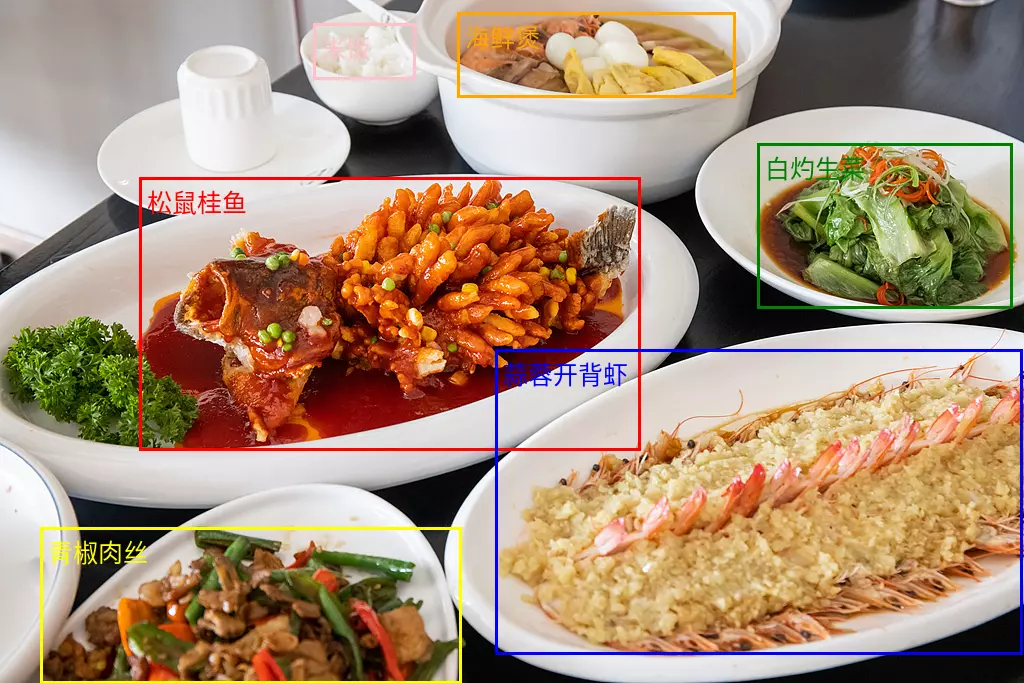

图像问答

描述图像中的内容或者对其进行分类打标,如识别人物、地点、动植物等。

如果太阳很刺眼,我应该用这张图中的什么物品? | 当太阳很刺眼时,你应该使用图中的粉色太阳镜。太阳镜可以有效阻挡强光、减少紫外线对眼睛的伤害,帮助你在阳光强烈时保护视力并提升视觉舒适度。 |

创意写作

根据图片或视频内容生成生动的文字描述,适用于故事创作、文案撰写、短视频脚本等创意场景。

请根据图片内容,帮我写一段有意思的朋友圈文案。 | 好的,这张图片充满了浓郁的中秋节日氛围,古典与现代元素结合得非常巧妙。根据图片中的主要元素,我为你准备了几个不同风格的朋友圈文案,你可以根据自己的喜好选择。 诗意唯美风 今夜月明人尽望,不知秋思落谁家。嫦娥奔月,玉兔捣药,古人的浪漫在今夜被点亮。愿这轮明月,能照亮你回家的路,也能寄去我最深的思念。中秋节快乐! 温馨祝福风 月圆人团圆,中秋夜最温柔。看烟花绽放,赏圆月当空,吃一口月饼,道一声安康。愿你我心中所念,皆能如愿以偿。祝大家中秋快乐,阖家幸福! |

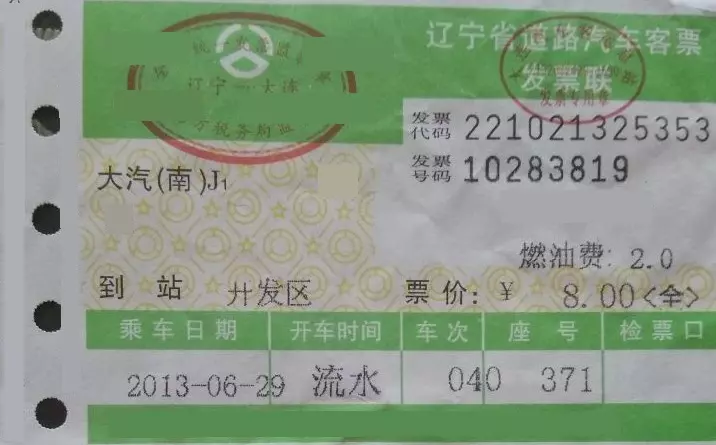

文字识别与信息抽取

识别图像中的文字、公式或抽取票据、证件、表单中的信息,支持格式化输出文本;支持的语言可参见模型特性对比。

提取图中的:['发票代码','发票号码','到站','燃油费','票价','乘车日期','开车时间','车次','座号'],请你以JSON格式输出。 | { "发票代码": "221021325353", "发票号码": "10283819", "到站": "开发区", "燃油费": "2.0", "票价": "8.00<全>", "乘车日期": "2013-06-29", "开车时间": "流水", "车次": "040", "座号": "371" } |

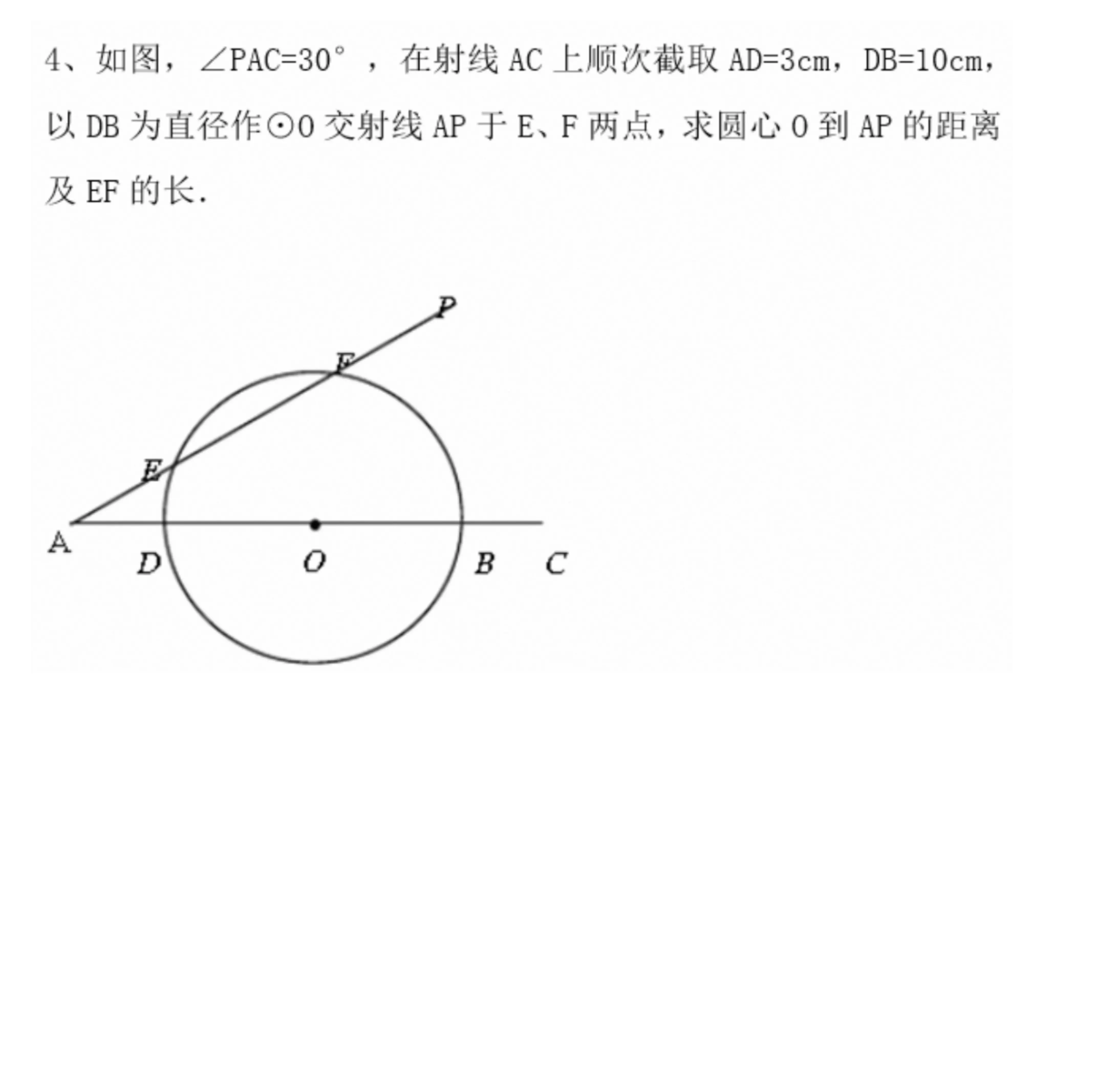

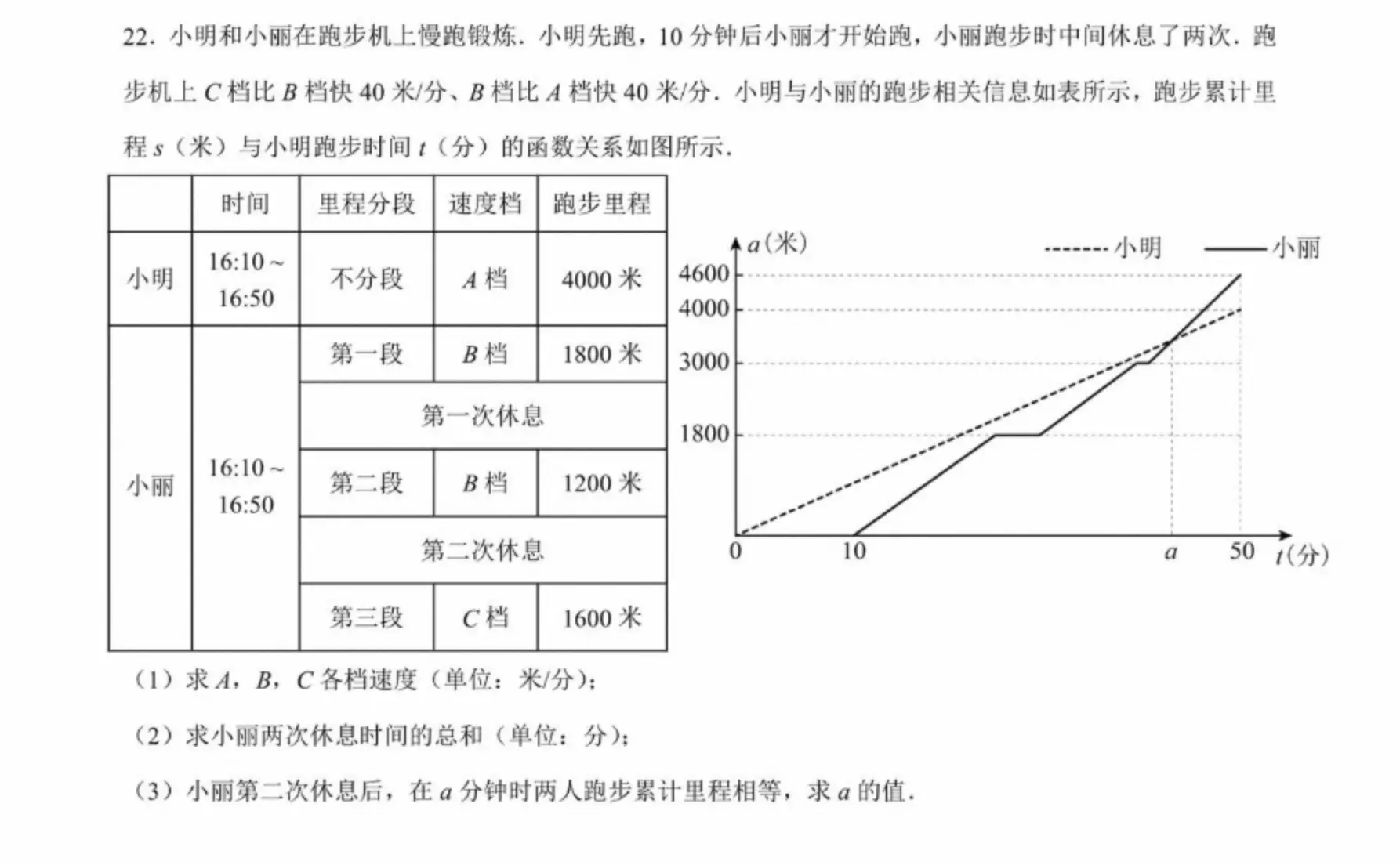

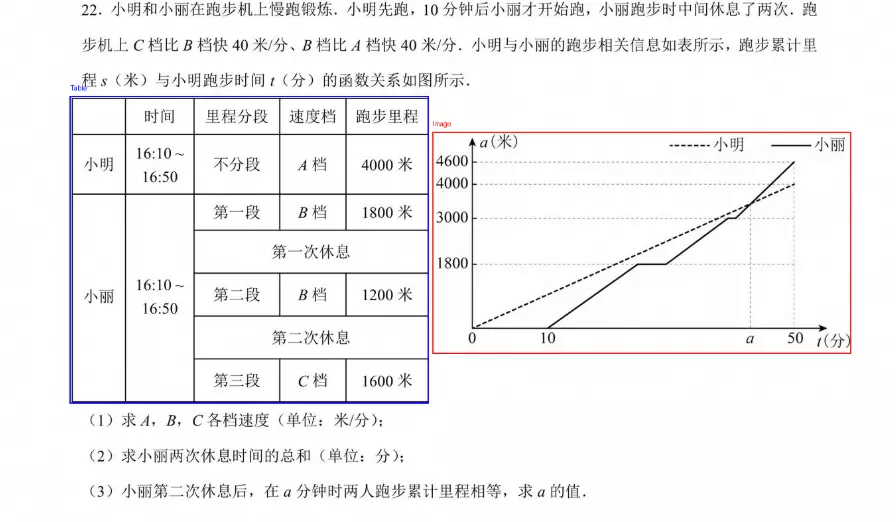

多学科题目解答

解答图像中的数学、物理、化学等问题,适用于中小学、大学以及成人教育阶段。

请你分步骤解答图中的数学题。 |

|

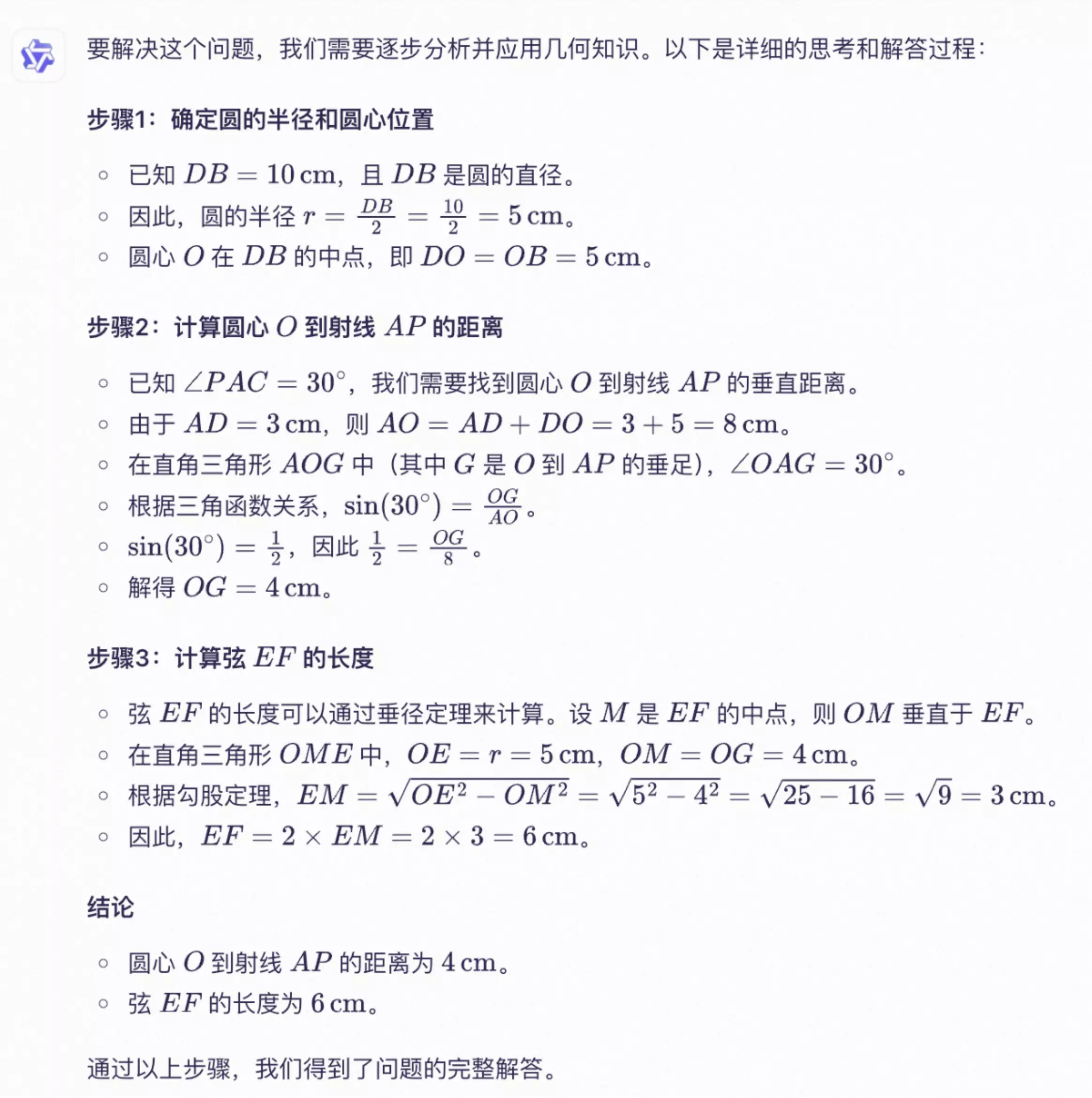

视觉编程

可通过图像或视频生成代码,可用于将设计图、网站截图等生成HTML、CSS、JS 代码。

根据我的草图设计使用HTML、CSS创建网页,主色调为黑色。 |

网页预览效果 |

物体定位

支持二维和三维定位,可用于判断物体方位、视角变化、遮挡关系。三维定位为Qwen3-VL模型新增能力。

Qwen2.5-VL模型 480*480 ~ 2560*2560 分辨率范围内,物体定位效果较为鲁棒,在此范围之外检测精度可能会下降(偶发检测框漂移现象)。

如需将定位结果绘制到原图可参见常见问题。

二维定位

| 可视化展示二维定位效果

|

文档解析

将图像类的文档(如扫描件/图片PDF)解析为 QwenVL HTML 或 QwenVL Markdown 格式,该格式不仅能精准识别文本,还能获取图像、表格等元素的位置信息。Qwen3-VL模型新增解析为 Markdown 格式的能力。

推荐提示词如下:qwenvl html(解析为HTML格式)或qwenvl markdown(解析为Markdown格式)

qwenvl markdown。 |

可视化展示效果 |

视频理解

分析视频内容,如对具体事件进行定位并获取时间戳、生成关键时间段的摘要等。

请你描述下视频中的人物的一系列动作,以JSON格式输出开始时间(start_time)、结束时间(end_time)、事件(event),请使用HH:mm:ss表示 时间戳。 | { "events": [ { "start_time": "00:00:00", "end_time": "00:00:05", "event": "人物手持一个纸箱走向桌子,并将纸箱放在桌上。" }, { "start_time": "00:00:05", "end_time": "00:00:15", "event": "人物拿起扫描枪,对准纸箱上的标签进行扫描。" }, { "start_time": "00:00:15", "end_time": "00:00:21", "event": "人物将扫描枪放回原位,然后拿起笔在笔记本上记录信息。"}] } |

核心能力

开启/关闭思考模式

qwen3.6、qwen3.5、qwen3-vl-plus、qwen3-vl-flash系列模型属于混合思考模型,模型可以在思考后回复,也可直接回复;通过enable_thinking参数控制是否开启思考模式:true:开启思考模式。qwen3.6、qwen3.5系列模型默认为true。false:关闭思考模式。qwen3-vl-plus、qwen3-vl-flash系列模型默认为false。

qwen3-vl-235b-a22b-thinking等带thinking后缀的属于仅思考模型,模型总会在回复前进行思考,且无法关闭。

模型配置:在非 Agent 工具调用的通用对话场景下,为保持最佳效果,建议不设置

System Message,可将模型角色设定、输出格式要求等指令通过User Message传入。优先使用流式输出: 开启思考模式时,支持流式和非流式两种输出方式。为避免因响应内容过长导致超时,建议优先使用流式输出方式。

限制思考长度:深度思考模型有时会输出冗长的推理过程,可使用

thinking_budget参数限制思考过程的长度。若模型思考过程生成的 Token 数超过thinking_budget,推理内容会进行截断并立刻开始生成最终回复内容。thinking_budget默认值为模型的最大思维链长度,请参见模型列表。

OpenAI 兼容

enable_thinking非 OpenAI 标准参数,若使用 OpenAI Python SDK 请通过 extra_body传入。

from openai import OpenAI

import os

# 初始化OpenAI客户端

client = OpenAI(

# 若没有配置环境变量,请用阿里云百炼API Key将下行替换为:api_key="sk-xxx",

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

api_key=os.getenv("DASHSCOPE_API_KEY"),

# 各地域配置不同,请根据实际地域修改

base_url="https://dashscope.aliyuncs.com/compatible-mode/v1",

)

reasoning_content = "" # 定义完整思考过程

answer_content = "" # 定义完整回复

is_answering = False # 判断是否结束思考过程并开始回复

enable_thinking = True

# 创建聊天完成请求

completion = client.chat.completions.create(

model="qwen3.6-plus",

messages=[

{

"role": "user",

"content": [

{

"type": "image_url",

"image_url": {

"url": "https://img.alicdn.com/imgextra/i1/O1CN01gDEY8M1W114Hi3XcN_!!6000000002727-0-tps-1024-406.jpg"

},

},

{"type": "text", "text": "这道题怎么解答?"},

],

},

],

stream=True,

# enable_thinking 参数开启思考过程,thinking_budget 参数设置最大推理过程 Token 数

# 通过enable_thinking参数切换思考模式

extra_body={

'enable_thinking': enable_thinking,

"thinking_budget": 81920},

# 解除以下注释会在最后一个chunk返回Token使用量

# stream_options={

# "include_usage": True

# }

)

if enable_thinking:

print("\n" + "=" * 20 + "思考过程" + "=" * 20 + "\n")

for chunk in completion:

# 如果chunk.choices为空,则打印usage

if not chunk.choices:

print("\nUsage:")

print(chunk.usage)

else:

delta = chunk.choices[0].delta

# 打印思考过程

if hasattr(delta, 'reasoning_content') and delta.reasoning_content is not None:

print(delta.reasoning_content, end='', flush=True)

reasoning_content += delta.reasoning_content

else:

# 开始回复

if delta.content != "" and is_answering is False:

print("\n" + "=" * 20 + "完整回复" + "=" * 20 + "\n")

is_answering = True

# 打印回复过程

print(delta.content, end='', flush=True)

answer_content += delta.content

# print("=" * 20 + "完整思考过程" + "=" * 20 + "\n")

# print(reasoning_content)

# print("=" * 20 + "完整回复" + "=" * 20 + "\n")

# print(answer_content)import OpenAI from "openai";

// 初始化 openai 客户端

const openai = new OpenAI({

// 若没有配置环境变量,请用阿里云百炼API Key将下行替换为:api_key="sk-xxx",

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

apiKey: process.env.DASHSCOPE_API_KEY,

// 各地域配置不同,请根据实际地域修改

baseURL: 'https://dashscope.aliyuncs.com/compatible-mode/v1'

});

let reasoningContent = '';

let answerContent = '';

let isAnswering = false;

let enableThinking = true;

let messages = [

{

role: "user",

content: [

{ type: "image_url", image_url: { "url": "https://img.alicdn.com/imgextra/i1/O1CN01gDEY8M1W114Hi3XcN_!!6000000002727-0-tps-1024-406.jpg" } },

{ type: "text", text: "解答这道题" },

]

}]

async function main() {

try {

const stream = await openai.chat.completions.create({

model: 'qwen3.6-plus',

messages: messages,

stream: true,

// 注意:在 Node.js SDK,enableThinking 这样的非标准参数作为顶层属性传递的,无需放在 extra_body 中

enable_thinking: enableThinking,

thinking_budget: 81920

});

if (enableThinking){console.log('\n' + '='.repeat(20) + '思考过程' + '='.repeat(20) + '\n');}

for await (const chunk of stream) {

if (!chunk.choices?.length) {

console.log('\nUsage:');

console.log(chunk.usage);

continue;

}

const delta = chunk.choices[0].delta;

// 处理思考过程

if (delta.reasoning_content) {

process.stdout.write(delta.reasoning_content);

reasoningContent += delta.reasoning_content;

}

// 处理正式回复

else if (delta.content) {

if (!isAnswering) {

console.log('\n' + '='.repeat(20) + '完整回复' + '='.repeat(20) + '\n');

isAnswering = true;

}

process.stdout.write(delta.content);

answerContent += delta.content;

}

}

} catch (error) {

console.error('Error:', error);

}

}

main();# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl --location 'https://dashscope.aliyuncs.com/compatible-mode/v1/chat/completions' \

--header "Authorization: Bearer $DASHSCOPE_API_KEY" \

--header 'Content-Type: application/json' \

--data '{

"model": "qwen3.6-plus",

"messages": [

{

"role": "user",

"content": [

{

"type": "image_url",

"image_url": {

"url": "https://img.alicdn.com/imgextra/i1/O1CN01gDEY8M1W114Hi3XcN_!!6000000002727-0-tps-1024-406.jpg"

}

},

{

"type": "text",

"text": "请解答这道题"

}

]

}

],

"stream":true,

"stream_options":{"include_usage":true},

"enable_thinking": true,

"thinking_budget": 81920

}'DashScope

import os

import dashscope

from dashscope import MultiModalConversation

# 各地域配置不同,请根据实际地域修改

dashscope.base_http_api_url = "https://dashscope.aliyuncs.com/api/v1"

enable_thinking=True

messages = [

{

"role": "user",

"content": [

{"image": "https://img.alicdn.com/imgextra/i1/O1CN01gDEY8M1W114Hi3XcN_!!6000000002727-0-tps-1024-406.jpg"},

{"text": "解答这道题?"}

]

}

]

response = MultiModalConversation.call(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx",

api_key=os.getenv('DASHSCOPE_API_KEY'),

model="qwen3.6-plus",

messages=messages,

stream=True,

# enable_thinking 参数开启思考过程

# 通过enable_thinking参数切换思考模式

enable_thinking=enable_thinking,

# thinking_budget 参数设置最大推理过程 Token 数

thinking_budget=81920,

)

# 定义完整思考过程

reasoning_content = ""

# 定义完整回复

answer_content = ""

# 判断是否结束思考过程并开始回复

is_answering = False

if enable_thinking:

print("=" * 20 + "思考过程" + "=" * 20)

for chunk in response:

# 如果思考过程与回复皆为空,则忽略

message = chunk.output.choices[0].message

reasoning_content_chunk = message.get("reasoning_content", None)

if (chunk.output.choices[0].message.content == [] and

reasoning_content_chunk == ""):

pass

else:

# 如果当前为思考过程

if reasoning_content_chunk is not None and chunk.output.choices[0].message.content == []:

print(chunk.output.choices[0].message.reasoning_content, end="")

reasoning_content += chunk.output.choices[0].message.reasoning_content

# 如果当前为回复

elif chunk.output.choices[0].message.content != []:

if not is_answering:

print("\n" + "=" * 20 + "完整回复" + "=" * 20)

is_answering = True

print(chunk.output.choices[0].message.content[0]["text"], end="")

answer_content += chunk.output.choices[0].message.content[0]["text"]

# 如果您需要打印完整思考过程与完整回复,请将以下代码解除注释后运行

# print("=" * 20 + "完整思考过程" + "=" * 20 + "\n")

# print(f"{reasoning_content}")

# print("=" * 20 + "完整回复" + "=" * 20 + "\n")

# print(f"{answer_content}")// dashscope SDK的版本 >= 2.21.10

import java.util.*;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import io.reactivex.Flowable;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.exception.InputRequiredException;

import java.lang.System;

import com.alibaba.dashscope.utils.Constants;

public class Main {

// 各地域配置不同,请根据实际地域修改

static {Constants.baseHttpApiUrl="https://dashscope.aliyuncs.com/api/v1";}

private static final Logger logger = LoggerFactory.getLogger(Main.class);

private static StringBuilder reasoningContent = new StringBuilder();

private static StringBuilder finalContent = new StringBuilder();

private static boolean isFirstPrint = true;

private static void handleGenerationResult(MultiModalConversationResult message) {

String re = message.getOutput().getChoices().get(0).getMessage().getReasoningContent();

String reasoning = Objects.isNull(re)?"":re; // 默认值

List<Map<String, Object>> content = message.getOutput().getChoices().get(0).getMessage().getContent();

if (!reasoning.isEmpty()) {

reasoningContent.append(reasoning);

if (isFirstPrint) {

System.out.println("====================思考过程====================");

isFirstPrint = false;

}

System.out.print(reasoning);

}

if (Objects.nonNull(content) && !content.isEmpty()) {

Object text = content.get(0).get("text");

finalContent.append(content.get(0).get("text"));

if (!isFirstPrint) {

System.out.println("\n====================完整回复====================");

isFirstPrint = true;

}

System.out.print(text);

}

}

public static MultiModalConversationParam buildMultiModalConversationParam(MultiModalMessage Msg) {

return MultiModalConversationParam.builder()

// 若没有配置环境变量,请用百炼API Key将下行替换为:.apiKey("sk-xxx")

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model("qwen3.6-plus")

.messages(Arrays.asList(Msg))

.enableThinking(true)

.thinkingBudget(81920)

.incrementalOutput(true)

.build();

}

public static void streamCallWithMessage(MultiModalConversation conv, MultiModalMessage Msg)

throws NoApiKeyException, ApiException, InputRequiredException, UploadFileException {

MultiModalConversationParam param = buildMultiModalConversationParam(Msg);

Flowable<MultiModalConversationResult> result = conv.streamCall(param);

result.blockingForEach(message -> {

handleGenerationResult(message);

});

}

public static void main(String[] args) {

try {

MultiModalConversation conv = new MultiModalConversation();

MultiModalMessage userMsg = MultiModalMessage.builder()

.role(Role.USER.getValue())

.content(Arrays.asList(Collections.singletonMap("image", "https://img.alicdn.com/imgextra/i1/O1CN01gDEY8M1W114Hi3XcN_!!6000000002727-0-tps-1024-406.jpg"),

Collections.singletonMap("text", "请解答这道题")))

.build();

streamCallWithMessage(conv, userMsg);

// 打印最终结果

// if (reasoningContent.length() > 0) {

// System.out.println("\n====================完整回复====================");

// System.out.println(finalContent.toString());

// }

} catch (ApiException | NoApiKeyException | UploadFileException | InputRequiredException e) {

logger.error("An exception occurred: {}", e.getMessage());

}

System.exit(0);

}

}# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl -X POST https://dashscope.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-H 'X-DashScope-SSE: enable' \

-d '{

"model": "qwen3.6-plus",

"input":{

"messages":[

{

"role": "user",

"content": [

{"image": "https://img.alicdn.com/imgextra/i1/O1CN01gDEY8M1W114Hi3XcN_!!6000000002727-0-tps-1024-406.jpg"},

{"text": "请解答这道题"}

]

}

]

},

"parameters":{

"enable_thinking": true,

"incremental_output": true,

"thinking_budget": 81920

}

}'多图像输入

视觉理解 模型支持在单次请求中传入多张图片,可用于商品对比、多页文档处理等任务。实现时只需在user message 的content数组中包含多个图片对象即可。

图片数量受模型图文总 Token 上限的限制,所有图片和文本的总 Token 数必须小于模型的最大输入。

OpenAI兼容

Python

import os

from openai import OpenAI

client = OpenAI(

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

api_key=os.getenv("DASHSCOPE_API_KEY"),

# 各地域配置不同,请根据实际地域修改

base_url="https://dashscope.aliyuncs.com/compatible-mode/v1",

)

completion = client.chat.completions.create(

model="qwen3.6-plus", # 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages=[

{"role": "user","content": [

{"type": "image_url","image_url": {"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"},},

{"type": "image_url","image_url": {"url": "https://dashscope.oss-cn-beijing.aliyuncs.com/images/tiger.png"},},

{"type": "text", "text": "这些图描绘了什么内容?"},

],

}

],

)

print(completion.choices[0].message.content)返回结果

图1中是一位女士和一只拉布拉多犬在海滩上互动的场景。女士穿着格子衬衫,坐在沙滩上,与狗进行握手的动作,背景是海浪和天空,整个画面充满了温馨和愉快的氛围。

图2中是一只老虎在森林中行走的场景。老虎的毛色是橙色和黑色条纹相间,它正向前迈步,周围是茂密的树木和植被,地面上覆盖着落叶,整个画面给人一种野生自然的感觉。Node.js

import OpenAI from "openai";

const openai = new OpenAI(

{

// 若没有配置环境变量,请用百炼API Key将下行替换为:apiKey: "sk-xxx"

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

apiKey: process.env.DASHSCOPE_API_KEY,

// 各地域配置不同,请根据实际地域修改

baseURL: "https://dashscope.aliyuncs.com/compatible-mode/v1"

}

);

async function main() {

const response = await openai.chat.completions.create({

model: "qwen3.6-plus", // 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages: [

{role: "user",content: [

{type: "image_url",image_url: {"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"}},

{type: "image_url",image_url: {"url": "https://dashscope.oss-cn-beijing.aliyuncs.com/images/tiger.png"}},

{type: "text", text: "这些图描绘了什么内容?" },

]}]

});

console.log(response.choices[0].message.content);

}

main()返回结果

第一张图片中,一个人和一只狗在海滩上互动。人穿着格子衬衫,狗戴着项圈,他们似乎在握手或击掌。

第二张图片中,一只老虎在森林中行走。老虎的毛色是橙色和黑色条纹,背景是绿色的树木和植被。curl

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl -X POST https://dashscope.aliyuncs.com/compatible-mode/v1/chat/completions \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.6-plus",

"messages": [

{

"role": "user",

"content": [

{

"type": "image_url",

"image_url": {

"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"

}

},

{

"type": "image_url",

"image_url": {

"url": "https://dashscope.oss-cn-beijing.aliyuncs.com/images/tiger.png"

}

},

{

"type": "text",

"text": "这些图描绘了什么内容?"

}

]

}

]

}'返回结果

{

"choices": [

{

"message": {

"content": "图1中是一位女士和一只拉布拉多犬在海滩上互动的场景。女士穿着格子衬衫,坐在沙滩上,与狗进行握手的动作,背景是海景和日落的天空,整个画面显得非常温馨和谐。\n\n图2中是一只老虎在森林中行走的场景。老虎的毛色是橙色和黑色条纹相间,它正向前迈步,周围是茂密的树木和植被,地面上覆盖着落叶,整个画面充满了自然的野性和生机。",

"role": "assistant"

},

"finish_reason": "stop",

"index": 0,

"logprobs": null

}

],

"object": "chat.completion",

"usage": {

"prompt_tokens": 2497,

"completion_tokens": 109,

"total_tokens": 2606

},

"created": 1725948561,

"system_fingerprint": null,

"model": "qwen3.6-plus",

"id": "chatcmpl-0fd66f46-b09e-9164-a84f-3ebbbedbac15"

}DashScope

Python

import os

import dashscope

# 各地域配置不同,请根据实际地域修改

dashscope.base_http_api_url = "https://dashscope.aliyuncs.com/api/v1"

messages = [

{

"role": "user",

"content": [

{"image": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"},

{"image": "https://dashscope.oss-cn-beijing.aliyuncs.com/images/tiger.png"},

{"text": "这些图描绘了什么内容?"}

]

}

]

response = dashscope.MultiModalConversation.call(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx"

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

api_key=os.getenv('DASHSCOPE_API_KEY'),

model='qwen3.6-plus', # 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages=messages

)

print(response.output.choices[0].message.content[0]["text"])返回结果

这些图片展示了一些动物和自然场景。第一张图片中,一个人和一只狗在海滩上互动。第二张图片是一只老虎在森林中行走Java

import java.util.Arrays;

import java.util.Collections;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import java.util.HashMap;

import com.alibaba.dashscope.utils.Constants;

public class Main {

// 各地域配置不同,请根据实际地域修改

static {Constants.baseHttpApiUrl="https://dashscope.aliyuncs.com/api/v1";}

public static void simpleMultiModalConversationCall()

throws ApiException, NoApiKeyException, UploadFileException {

MultiModalConversation conv = new MultiModalConversation();

MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())

.content(Arrays.asList(

Collections.singletonMap("image", "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"),

Collections.singletonMap("image", "https://dashscope.oss-cn-beijing.aliyuncs.com/images/tiger.png"),

Collections.singletonMap("text", "这些图描绘了什么内容?"))).build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// 若没有配置环境变量,请用百炼API Key将下行替换为:.apiKey("sk-xxx")

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model("qwen3.6-plus") // 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

.messages(Arrays.asList(userMessage))

.build();

MultiModalConversationResult result = conv.call(param);

System.out.println(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text")); }

public static void main(String[] args) {

try {

simpleMultiModalConversationCall();

} catch (ApiException | NoApiKeyException | UploadFileException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}返回结果

这些图片展示了一些动物和自然场景。

1. 第一张图片:一个女人和一只狗在海滩上互动。女人穿着格子衬衫,坐在沙滩上,狗戴着项圈,伸出爪子与女人握手。

2. 第二张图片:一只老虎在森林中行走。老虎的毛色是橙色和黑色条纹,背景是树木和树叶。curl

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl --location 'https://dashscope.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation' \

--header "Authorization: Bearer $DASHSCOPE_API_KEY" \

--header 'Content-Type: application/json' \

--data '{

"model": "qwen3.6-plus",

"input":{

"messages":[

{

"role": "user",

"content": [

{"image": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241022/emyrja/dog_and_girl.jpeg"},

{"image": "https://dashscope.oss-cn-beijing.aliyuncs.com/images/tiger.png"},

{"text": "这些图展现了什么内容?"}

]

}

]

}

}'返回结果

{

"output": {

"choices": [

{

"finish_reason": "stop",

"message": {

"role": "assistant",

"content": [

{

"text": "这些图片展示了一些动物和自然场景。第一张图片中,一个人和一只狗在海滩上互动。第二张图片是一只老虎在森林中行走。"

}

]

}

}

]

},

"usage": {

"output_tokens": 81,

"input_tokens": 1277,

"image_tokens": 2497

},

"request_id": "ccf845a3-dc33-9cda-b581-20fe7dc23f70"

}视频理解

视觉理解模型支持对视频内容进行理解,文件形式包括图像列表(视频帧)或视频文件。以下是理解在线视频或图像列表(通过URL指定)的示例代码。关于视频限制或可传入的图像列表数量限制,请参见视频限制章节。

建议使用性能较优的最新版或近期快照版模型理解视频文件。

视频文件

视觉理解模型通过从视频中提取帧序列进行内容分析。您可以通过以下两个参数控制抽帧策略:

fps:控制抽帧频率,每隔

秒抽取一帧。取值范围为 [0.1, 10],默认值为 2.0。 高速运动场景:建议设置较高的 fps 值,以捕捉更多细节

静态或长视频:建议设置较低的 fps 值,以提高处理效率

max_frames:限制视频抽取帧的上限。当按 fps 计算的总帧数超过此限制时,系统将自动在 max_frames 内均匀抽帧。此参数仅在使用 DashScope SDK 时可用。

OpenAI兼容

使用OpenAI SDK或HTTP方式向视觉理解模型直接输入视频文件时,需要将用户消息中的"type"参数设为"video_url"。

Python

import os

from openai import OpenAI

client = OpenAI(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx",

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

api_key=os.getenv("DASHSCOPE_API_KEY"),

# 各地域配置不同,请根据实际地域修改

base_url="https://dashscope.aliyuncs.com/compatible-mode/v1",

)

completion = client.chat.completions.create(

model="qwen3.6-plus",

messages=[

{

"role": "user",

"content": [

# 直接传入视频文件时,请将type的值设置为video_url

{

"type": "video_url",

"video_url": {

"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241115/cqqkru/1.mp4"

},

"fps": 2

},

{

"type": "text",

"text": "这段视频的内容是什么?"

}

]

}

]

)

print(completion.choices[0].message.content)Node.js

import OpenAI from "openai";

const openai = new OpenAI({

// 若没有配置环境变量,请用百炼API Key将下行替换为:apiKey: "sk-xxx"

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

apiKey: process.env.DASHSCOPE_API_KEY,

// 各地域配置不同,请根据实际地域修改

baseURL: "https://dashscope.aliyuncs.com/compatible-mode/v1"

});

async function main() {

const response = await openai.chat.completions.create({

model: "qwen3.6-plus",

messages: [

{

role: "user",

content: [

// 直接传入视频文件时,请将type的值设置为video_url

{

type: "video_url",

video_url: {

"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241115/cqqkru/1.mp4"

},

"fps": 2

},

{

type: "text",

text: "这段视频的内容是什么?"

}

]

}

]

});

console.log(response.choices[0].message.content);

}

main();curl

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl -X POST https://dashscope.aliyuncs.com/compatible-mode/v1/chat/completions \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.6-plus",

"messages": [

{

"role": "user",

"content": [

{

"type": "video_url",

"video_url": {

"url": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241115/cqqkru/1.mp4"

},

"fps":2

},

{

"type": "text",

"text": "这段视频的内容是什么?"

}

]

}

]

}'DashScope

Python

import dashscope

import os

# 各地域配置不同,请根据实际地域修改

dashscope.base_http_api_url = "https://dashscope.aliyuncs.com/api/v1"

messages = [

{"role": "user",

"content": [

# fps 可参数控制视频抽帧频率,表示每隔 1/fps 秒抽取一帧,完整用法请参见:https://help.aliyun.com/zh/model-studio/use-qwen-by-calling-api?#2ed5ee7377fum

{"video": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241115/cqqkru/1.mp4","fps":2},

{"text": "这段视频的内容是什么?"}

]

}

]

response = dashscope.MultiModalConversation.call(

# 若没有配置环境变量, 请用百炼API Key将下行替换为: api_key ="sk-xxx"

api_key=os.getenv('DASHSCOPE_API_KEY'),

model='qwen3.6-plus',

messages=messages

)

print(response.output.choices[0].message.content[0]["text"])Java

import java.util.Arrays;

import java.util.Collections;

import java.util.HashMap;

import java.util.Map;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.JsonUtils;

import com.alibaba.dashscope.utils.Constants;

public class Main {

// 各地域配置不同,请根据实际地域修改

static {Constants.baseHttpApiUrl="https://dashscope.aliyuncs.com/api/v1";}

public static void simpleMultiModalConversationCall()

throws ApiException, NoApiKeyException, UploadFileException {

MultiModalConversation conv = new MultiModalConversation();

// fps 可参数控制视频抽帧频率,表示每隔 1/fps 秒抽取一帧,完整用法请参见:https://help.aliyun.com/zh/model-studio/use-qwen-by-calling-api?#2ed5ee7377fum

Map<String, Object> params = new HashMap<>();

params.put("video", "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241115/cqqkru/1.mp4");

params.put("fps", 2);

MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())

.content(Arrays.asList(

params,

Collections.singletonMap("text", "这段视频的内容是什么?"))).build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// 若没有配置环境变量,请用百炼API Key将下行替换为:.apiKey("sk-xxx")

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model("qwen3.6-plus")

.messages(Arrays.asList(userMessage))

.build();

MultiModalConversationResult result = conv.call(param);

System.out.println(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));

}

public static void main(String[] args) {

try {

simpleMultiModalConversationCall();

} catch (ApiException | NoApiKeyException | UploadFileException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}curl

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl -X POST https://dashscope.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.6-plus",

"input":{

"messages":[

{"role": "user","content": [{"video": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241115/cqqkru/1.mp4","fps":2},

{"text": "这段视频的内容是什么?"}]}]}

}'图像列表

当视频以图像列表(即预先抽取的视频帧)传入时,可通过fps参数告知模型视频帧之间的时间间隔,这能帮助模型更准确地理解事件的顺序、持续时间和动态变化。模型支持通过 fps 参数指定原始视频的抽帧率,表示视频帧是每隔

OpenAI兼容

使用OpenAI SDK或HTTP方式向视觉理解模型输入图片列表形式的视频时,需要将用户消息中的"type"参数设为"video"。

Python

import os

from openai import OpenAI

client = OpenAI(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx",

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

api_key=os.getenv("DASHSCOPE_API_KEY"),

# 各地域配置不同,请根据实际地域修改

base_url="https://dashscope.aliyuncs.com/compatible-mode/v1",

)

completion = client.chat.completions.create(

model="qwen3.6-plus", # 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages=[{"role": "user","content": [

# 传入图像列表时,用户消息中的"type"参数为"video"

{"type": "video","video": [

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/xzsgiz/football1.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/tdescd/football2.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/zefdja/football3.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/aedbqh/football4.jpg"],

"fps":2},

{"type": "text","text": "描述这个视频的具体过程"},

]}]

)

print(completion.choices[0].message.content)Node.js

// 确保之前在 package.json 中指定了 "type": "module"

import OpenAI from "openai";

const openai = new OpenAI({

// 若没有配置环境变量,请用百炼API Key将下行替换为:apiKey: "sk-xxx",

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

apiKey: process.env.DASHSCOPE_API_KEY,

// 各地域配置不同,请根据实际地域修改

baseURL: "https://dashscope.aliyuncs.com/compatible-mode/v1"

});

async function main() {

const response = await openai.chat.completions.create({

model: "qwen3.6-plus", // 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages: [{

role: "user",

content: [

{

// 传入图像列表时,用户消息中的"type"参数为"video"

type: "video",

video: [

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/xzsgiz/football1.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/tdescd/football2.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/zefdja/football3.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/aedbqh/football4.jpg"],

"fps":2

},

{

type: "text",

text: "描述这个视频的具体过程"

}

]

}]

});

console.log(response.choices[0].message.content);

}

main();curl

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl -X POST https://dashscope.aliyuncs.com/compatible-mode/v1/chat/completions \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.6-plus",

"messages": [{"role": "user","content": [{"type": "video","video": [

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/xzsgiz/football1.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/tdescd/football2.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/zefdja/football3.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/aedbqh/football4.jpg"],

"fps":2},

{"type": "text","text": "描述这个视频的具体过程"}]}]

}'DashScope

Python

import os

import dashscope

# 各地域配置不同,请根据实际地域修改

dashscope.base_http_api_url = "https://dashscope.aliyuncs.com/api/v1"

messages = [{"role": "user",

"content": [

# 传入图像列表时,fps 参数适用于Qwen3.6、Qwen3-VL 和 Qwen2.5-VL系列模型

{"video":["https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/xzsgiz/football1.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/tdescd/football2.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/zefdja/football3.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/aedbqh/football4.jpg"],

"fps":2},

{"text": "描述这个视频的具体过程"}]}]

response = dashscope.MultiModalConversation.call(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx",

api_key=os.getenv("DASHSCOPE_API_KEY"),

model='qwen3.6-plus', # 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages=messages

)

print(response.output.choices[0].message.content[0]["text"])Java

// DashScope SDK版本需要不低于2.21.10

import java.util.Arrays;

import java.util.Collections;

import java.util.HashMap;

import java.util.Map;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.Constants;

public class Main {

// 各地域配置不同,请根据实际地域修改

static {Constants.baseHttpApiUrl="https://dashscope.aliyuncs.com/api/v1";}

private static final String MODEL_NAME = "qwen3.6-plus"; // 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

public static void videoImageListSample() throws ApiException, NoApiKeyException, UploadFileException {

MultiModalConversation conv = new MultiModalConversation();

// 传入图像列表时,fps 参数适用于Qwen3.6、Qwen3-VL 和 Qwen2.5-VL系列模型

Map<String, Object> params = new HashMap<>();

params.put("video", Arrays.asList("https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/xzsgiz/football1.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/tdescd/football2.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/zefdja/football3.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/aedbqh/football4.jpg"));

params.put("fps", 2);

MultiModalMessage userMessage = MultiModalMessage.builder()

.role(Role.USER.getValue())

.content(Arrays.asList(

params,

Collections.singletonMap("text", "描述这个视频的具体过程")))

.build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// 若没有配置环境变量,请用百炼API Key将下行替换为:.apiKey("sk-xxx")

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model(MODEL_NAME)

.messages(Arrays.asList(userMessage)).build();

MultiModalConversationResult result = conv.call(param);

System.out.print(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));

}

public static void main(String[] args) {

try {

videoImageListSample();

} catch (ApiException | NoApiKeyException | UploadFileException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}curl

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl -X POST https://dashscope.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.6-plus",

"input": {

"messages": [

{

"role": "user",

"content": [

{

"video": [

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/xzsgiz/football1.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/tdescd/football2.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/zefdja/football3.jpg",

"https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20241108/aedbqh/football4.jpg"

],

"fps":2

},

{

"text": "描述这个视频的具体过程"

}

]

}

]

}

}'传入本地文件(Base64 编码或文件路径)

视觉理解模型提供两种本地文件上传方式:Base64 编码上传和文件路径直接上传。可根据文件大小、SDK类型选择上传方式,具体建议请参见如何选择文件上传方式;两种方式均需满足图像限制中对文件的要求。

Base64 编码上传

将文件转换为 Base64 编码字符串,再传入模型。适用于 OpenAI 和 DashScope SDK 及 HTTP 方式

文件路径上传

直接向模型传入本地文件路径。仅 DashScope Python 和 Java SDK 支持,不支持 DashScope HTTP 和OpenAI 兼容方式。

请您参考下表,结合您的编程语言与操作系统指定文件的路径。

图像

文件路径传入

Python

import os

from dashscope import MultiModalConversation

import dashscope

# 各地域配置不同,请根据实际地域修改

dashscope.base_http_api_url = "https://dashscope.aliyuncs.com/api/v1"

# 将xxx/eagle.png替换为你本地图像的绝对路径

local_path = "xxx/eagle.png"

image_path = f"file://{local_path}"

messages = [

{'role':'user',

'content': [{'image': image_path},

{'text': '图中描绘的是什么景象?'}]}]

response = MultiModalConversation.call(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx"

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

api_key=os.getenv('DASHSCOPE_API_KEY'),

model='qwen3.6-plus', # 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages=messages)

print(response.output.choices[0].message.content[0]["text"])Java

import java.util.Arrays;

import java.util.Collections;

import java.util.HashMap;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.Constants;

public class Main {

// 各地域配置不同,请根据实际地域修改

static {Constants.baseHttpApiUrl="https://dashscope.aliyuncs.com/api/v1";}

public static void callWithLocalFile(String localPath)

throws ApiException, NoApiKeyException, UploadFileException {

String filePath = "file://"+localPath;

MultiModalConversation conv = new MultiModalConversation();

MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())

.content(Arrays.asList(new HashMap<String, Object>(){{put("image", filePath);}},

new HashMap<String, Object>(){{put("text", "图中描绘的是什么景象?");}})).build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// 若没有配置环境变量,请用百炼API Key将下行替换为:.apiKey("sk-xxx")

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model("qwen3.6-plus") // 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

.messages(Arrays.asList(userMessage))

.build();

MultiModalConversationResult result = conv.call(param);

System.out.println(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));}

public static void main(String[] args) {

try {

// 将xxx/eagle.png替换为你本地图像的绝对路径

callWithLocalFile("xxx/eagle.png");

} catch (ApiException | NoApiKeyException | UploadFileException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}Base64 编码传入

OpenAI兼容

Python

from openai import OpenAI

import os

import base64

# 编码函数: 将本地文件转换为 Base64 编码的字符串

def encode_image(image_path):

with open(image_path, "rb") as image_file:

return base64.b64encode(image_file.read()).decode("utf-8")

# 将xxxx/eagle.png替换为你本地图像的绝对路径

base64_image = encode_image("xxx/eagle.png")

client = OpenAI(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx"

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

api_key=os.getenv('DASHSCOPE_API_KEY'),

# 各地域配置不同,请根据实际地域修改

base_url="https://dashscope.aliyuncs.com/compatible-mode/v1",

)

completion = client.chat.completions.create(

model="qwen3.6-plus", # 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages=[

{

"role": "user",

"content": [

{

"type": "image_url",

# 需要注意,传入Base64,图像格式(即image/{format})需要与支持的图片列表中的Content Type保持一致。"f"是字符串格式化的方法。

# PNG图像: f"data:image/png;base64,{base64_image}"

# JPEG图像: f"data:image/jpeg;base64,{base64_image}"

# WEBP图像: f"data:image/webp;base64,{base64_image}"

"image_url": {"url": f"data:image/png;base64,{base64_image}"},

},

{"type": "text", "text": "图中描绘的是什么景象?"},

],

}

],

)

print(completion.choices[0].message.content)Node.js

import OpenAI from "openai";

import { readFileSync } from 'fs';

const openai = new OpenAI(

{

// 若没有配置环境变量,请用百炼API Key将下行替换为:apiKey: "sk-xxx"

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

apiKey: process.env.DASHSCOPE_API_KEY,

// 各地域配置不同,请根据实际地域修改

baseURL: "https://dashscope.aliyuncs.com/compatible-mode/v1"

}

);

const encodeImage = (imagePath) => {

const imageFile = readFileSync(imagePath);

return imageFile.toString('base64');

};

// 将xxx/eagle.png替换为你本地图像的绝对路径

const base64Image = encodeImage("xxx/eagle.png")

async function main() {

const completion = await openai.chat.completions.create({

model: "qwen3.6-plus", // 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages: [

{"role": "user",

"content": [{"type": "image_url",

// 需要注意,传入Base64,图像格式(即image/{format})需要与支持的图片列表中的Content Type保持一致。

// PNG图像: data:image/png;base64,${base64Image}

// JPEG图像: data:image/jpeg;base64,${base64Image}

// WEBP图像: data:image/webp;base64,${base64Image}

"image_url": {"url": `data:image/png;base64,${base64Image}`},},

{"type": "text", "text": "图中描绘的是什么景象?"}]}]

});

console.log(completion.choices[0].message.content);

}

main();curl

将文件转换为 Base64 编码的字符串的方法可参见示例代码;

为了便于展示,代码中的

"data:image/jpg;base64,/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAA...",该Base64 编码字符串是截断的。在实际使用中,请务必传入完整的编码字符串。

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl --location 'https://dashscope.aliyuncs.com/compatible-mode/v1/chat/completions' \

--header "Authorization: Bearer $DASHSCOPE_API_KEY" \

--header 'Content-Type: application/json' \

--data '{

"model": "qwen3.6-plus",

"messages": [

{

"role": "user",

"content": [

{"type": "image_url", "image_url": {"url": "data:image/jpg;base64,/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAA..."}},

{"type": "text", "text": "图中描绘的是什么景象?"}

]

}]

}'DashScope

Python

import base64

import os

import dashscope

from dashscope import MultiModalConversation

# 各地域配置不同,请根据实际地域修改

dashscope.base_http_api_url = "https://dashscope.aliyuncs.com/api/v1"

# 编码函数: 将本地文件转换为 Base64 编码的字符串

def encode_image(image_path):

with open(image_path, "rb") as image_file:

return base64.b64encode(image_file.read()).decode("utf-8")

# 将xxxx/eagle.png替换为你本地图像的绝对路径

base64_image = encode_image("xxxx/eagle.png")

messages = [

{

"role": "user",

"content": [

# 需要注意,传入Base64,图像格式(即image/{format})需要与支持的图片列表中的Content Type保持一致。"f"是字符串格式化的方法。

# PNG图像: f"data:image/png;base64,{base64_image}"

# JPEG图像: f"data:image/jpeg;base64,{base64_image}"

# WEBP图像: f"data:image/webp;base64,{base64_image}"

{"image": f"data:image/png;base64,{base64_image}"},

{"text": "图中描绘的是什么景象?"},

],

},

]

response = MultiModalConversation.call(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx"

api_key=os.getenv("DASHSCOPE_API_KEY"),

model="qwen3.6-plus", # 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages=messages,

)

print(response.output.choices[0].message.content[0]["text"])Java

import java.io.IOException;

import java.util.Arrays;

import java.util.Collections;

import java.util.HashMap;

import java.util.Base64;

import java.nio.file.Files;

import java.nio.file.Path;

import java.nio.file.Paths;

import com.alibaba.dashscope.aigc.multimodalconversation.*;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.Constants;

public class Main {

// 各地域配置不同,请根据实际地域修改

static {Constants.baseHttpApiUrl="https://dashscope.aliyuncs.com/api/v1";}

private static String encodeImageToBase64(String imagePath) throws IOException {

Path path = Paths.get(imagePath);

byte[] imageBytes = Files.readAllBytes(path);

return Base64.getEncoder().encodeToString(imageBytes);

}

public static void callWithLocalFile(String localPath) throws ApiException, NoApiKeyException, UploadFileException, IOException {

String base64Image = encodeImageToBase64(localPath); // Base64编码

MultiModalConversation conv = new MultiModalConversation();

MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())

.content(Arrays.asList(

new HashMap<String, Object>() {{ put("image", "data:image/png;base64," + base64Image); }},

new HashMap<String, Object>() {{ put("text", "图中描绘的是什么景象?"); }}

)).build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model("qwen3.6-plus")

.messages(Arrays.asList(userMessage))

.build();

MultiModalConversationResult result = conv.call(param);

System.out.println(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));

}

public static void main(String[] args) {

try {

// 将 xxx/eagle.png 替换为你本地图像的绝对路径

callWithLocalFile("xxx/eagle.png");

} catch (ApiException | NoApiKeyException | UploadFileException | IOException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}curl

将文件转换为 Base64 编码的字符串的方法可参见示例代码;

为了便于展示,代码中的

"data:image/jpg;base64,/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAA...",该Base64 编码字符串是截断的。在实际使用中,请务必传入完整的编码字符串。

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl -X POST https://dashscope.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.6-plus",

"input":{

"messages":[

{

"role": "user",

"content": [

{"image": "data:image/jpeg;base64,/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAA..."},

{"text": "图中描绘的是什么景象?"}

]

}

]

}

}'视频文件

以保存在本地的test.mp4为例。

文件路径传入

Python

import os

from dashscope import MultiModalConversation

import dashscope

# 各地域配置不同,请根据实际地域修改

dashscope.base_http_api_url = "https://dashscope.aliyuncs.com/api/v1"

# 将xxxx/test.mp4替换为你本地视频的绝对路径

local_path = "xxx/test.mp4"

video_path = f"file://{local_path}"

messages = [

{'role':'user',

# fps参数控制视频抽帧数量,表示每隔1/fps 秒抽取一帧

'content': [{'video': video_path,"fps":2},

{'text': '这段视频描绘的是什么景象?'}]}]

response = MultiModalConversation.call(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx"

api_key=os.getenv('DASHSCOPE_API_KEY'),

model='qwen3.6-plus',

messages=messages)

print(response.output.choices[0].message.content[0]["text"])Java

import java.util.Arrays;

import java.util.Collections;

import java.util.HashMap;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.Constants;

public class Main {

// 各地域配置不同,请根据实际地域修改

static {Constants.baseHttpApiUrl="https://dashscope.aliyuncs.com/api/v1";}

public static void callWithLocalFile(String localPath)

throws ApiException, NoApiKeyException, UploadFileException {

String filePath = "file://"+localPath;

MultiModalConversation conv = new MultiModalConversation();

MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())

.content(Arrays.asList(new HashMap<String, Object>()

{{

put("video", filePath);// fps参数控制视频抽帧数量,表示每隔1/fps 秒抽取一帧

put("fps", 2);

}},

new HashMap<String, Object>(){{put("text", "这段视频描绘的是什么景象?");}})).build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model("qwen3.6-plus")

.messages(Arrays.asList(userMessage))

.build();

MultiModalConversationResult result = conv.call(param);

System.out.println(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));}

public static void main(String[] args) {

try {

// 将xxxx/test.mp4替换为你本地视频的绝对路径

callWithLocalFile("xxx/test.mp4");

} catch (ApiException | NoApiKeyException | UploadFileException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}Base64 编码传入

OpenAI兼容

Python

from openai import OpenAI

import os

import base64

# 编码函数: 将本地文件转换为 Base64 编码的字符串

def encode_video(video_path):

with open(video_path, "rb") as video_file:

return base64.b64encode(video_file.read()).decode("utf-8")

# 将xxxx/test.mp4替换为你本地视频的绝对路径

base64_video = encode_video("xxx/test.mp4")

client = OpenAI(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx"

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

api_key=os.getenv('DASHSCOPE_API_KEY'),

# 各地域配置不同,请根据实际地域修改

base_url="https://dashscope.aliyuncs.com/compatible-mode/v1",

)

completion = client.chat.completions.create(

model="qwen3.6-plus",

messages=[

{

"role": "user",

"content": [

{

# 直接传入视频文件时,请将type的值设置为video_url

"type": "video_url",

"video_url": {"url": f"data:video/mp4;base64,{base64_video}"},

"fps":2

},

{"type": "text", "text": "这段视频描绘的是什么景象?"},

],

}

],

)

print(completion.choices[0].message.content)Node.js

import OpenAI from "openai";

import { readFileSync } from 'fs';

const openai = new OpenAI(

{

// 若没有配置环境变量,请用百炼API Key将下行替换为:apiKey: "sk-xxx"

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

apiKey: process.env.DASHSCOPE_API_KEY,

// 各地域配置不同,请根据实际地域修改

baseURL: "https://dashscope.aliyuncs.com/compatible-mode/v1"

}

);

const encodeVideo = (videoPath) => {

const videoFile = readFileSync(videoPath);

return videoFile.toString('base64');

};

// 将xxxx/test.mp4替换为你本地视频的绝对路径

const base64Video = encodeVideo("xxx/test.mp4")

async function main() {

const completion = await openai.chat.completions.create({

model: "qwen3.6-plus",

messages: [

{"role": "user",

"content": [{

// 直接传入视频文件时,请将type的值设置为video_url

"type": "video_url",

"video_url": {"url": `data:video/mp4;base64,${base64Video}`},

"fps":2},

{"type": "text", "text": "这段视频描绘的是什么景象?"}]}]

});

console.log(completion.choices[0].message.content);

}

main();

curl

将文件转换为 Base64 编码的字符串的方法可参见示例代码;

为了便于展示,代码中的

"data:video/mp4;base64,/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAA...",该Base64 编码字符串是截断的。在实际使用中,请务必传入完整的编码字符串。

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl --location 'https://dashscope.aliyuncs.com/compatible-mode/v1/chat/completions' \

--header "Authorization: Bearer $DASHSCOPE_API_KEY" \

--header 'Content-Type: application/json' \

--data '{

"model": "qwen3.6-plus",

"messages": [

{

"role": "user",

"content": [

{"type": "video_url", "video_url": {"url": "data:video/mp4;base64,/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAA..."},"fps":2},

{"type": "text", "text": "图中描绘的是什么景象?"}

]

}]

}'DashScope

Python

import base64

import os

import dashscope

from dashscope import MultiModalConversation

# 各地域配置不同,请根据实际地域修改

dashscope.base_http_api_url = "https://dashscope.aliyuncs.com/api/v1"

# 编码函数: 将本地文件转换为 Base64 编码的字符串

def encode_video(video_path):

with open(video_path, "rb") as video_file:

return base64.b64encode(video_file.read()).decode("utf-8")

# 将xxxx/test.mp4替换为你本地视频的绝对路径

base64_video = encode_video("xxxx/test.mp4")

messages = [{'role':'user',

# fps参数控制视频抽帧数量,表示每隔1/fps 秒抽取一帧

'content': [{'video': f"data:video/mp4;base64,{base64_video}","fps":2},

{'text': '这段视频描绘的是什么景象?'}]}]

response = MultiModalConversation.call(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx"

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

api_key=os.getenv('DASHSCOPE_API_KEY'),

model='qwen3.6-plus',

messages=messages)

print(response.output.choices[0].message.content[0]["text"])Java

import java.io.IOException;

import java.util.*;

import java.nio.file.Files;

import java.nio.file.Path;

import java.nio.file.Paths;

import com.alibaba.dashscope.aigc.multimodalconversation.*;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.Constants;

public class Main {

// 各地域配置不同,请根据实际地域修改

static {Constants.baseHttpApiUrl="https://dashscope.aliyuncs.com/api/v1";}

private static String encodeVideoToBase64(String videoPath) throws IOException {

Path path = Paths.get(videoPath);

byte[] videoBytes = Files.readAllBytes(path);

return Base64.getEncoder().encodeToString(videoBytes);

}

public static void callWithLocalFile(String localPath)

throws ApiException, NoApiKeyException, UploadFileException, IOException {

String base64Video = encodeVideoToBase64(localPath); // Base64编码

MultiModalConversation conv = new MultiModalConversation();

MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())

.content(Arrays.asList(new HashMap<String, Object>()

{{

put("video", "data:video/mp4;base64," + base64Video);// fps参数控制视频抽帧数量,表示每隔1/fps 秒抽取一帧

put("fps", 2);

}},

new HashMap<String, Object>(){{put("text", "这段视频描绘的是什么景象?");}})).build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// 若没有配置环境变量,请用百炼API Key将下行替换为:.apiKey("sk-xxx")

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model("qwen3.6-plus")

.messages(Arrays.asList(userMessage))

.build();

MultiModalConversationResult result = conv.call(param);

System.out.println(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));

}

public static void main(String[] args) {

try {

// 将 xxx/test.mp4 替换为你本地视频的绝对路径

callWithLocalFile("xxx/test.mp4");

} catch (ApiException | NoApiKeyException | UploadFileException | IOException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}curl

将文件转换为 Base64 编码的字符串的方法可参见示例代码;

为了便于展示,代码中的

"f"data:video/mp4;base64,/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAA...",该Base64 编码字符串是截断的。在实际使用中,请务必传入完整的编码字符串。

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl -X POST https://dashscope.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.6-plus",

"input":{

"messages":[

{

"role": "user",

"content": [

{"video": "data:video/mp4;base64,/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAA..."},

{"text": "这段视频描绘的是什么景象? "}

]

}

]

}

}'图像列表

以保存在本地的football1.jpg、football2.jpg、football3.jpg、football4.jpg为例。

文件路径传入

Python

import os

from dashscope import MultiModalConversation

import dashscope

# 各地域配置不同,请根据实际地域修改

dashscope.base_http_api_url = "https://dashscope.aliyuncs.com/api/v1"

local_path1 = "football1.jpg"

local_path2 = "football2.jpg"

local_path3 = "football3.jpg"

local_path4 = "football4.jpg"

image_path1 = f"file://{local_path1}"

image_path2 = f"file://{local_path2}"

image_path3 = f"file://{local_path3}"

image_path4 = f"file://{local_path4}"

messages = [{'role':'user',

# 传入图像列表时,fps 参数适用于Qwen3.6、Qwen3-VL 和 Qwen2.5-VL系列模型

'content': [{'video': [image_path1,image_path2,image_path3,image_path4],"fps":2},

{'text': '这段视频描绘的是什么景象?'}]}]

response = MultiModalConversation.call(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx"

api_key=os.getenv('DASHSCOPE_API_KEY'),

model='qwen3.6-plus', # 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages=messages)

print(response.output.choices[0].message.content[0]["text"])Java

// DashScope SDK版本需要不低于2.21.10

import java.util.Arrays;

import java.util.Map;

import java.util.Collections;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;

import com.alibaba.dashscope.common.MultiModalMessage;

import com.alibaba.dashscope.common.Role;

import com.alibaba.dashscope.exception.ApiException;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.alibaba.dashscope.exception.UploadFileException;

import com.alibaba.dashscope.utils.Constants;

public class Main {

// 各地域配置不同,请根据实际地域修改

static {Constants.baseHttpApiUrl="https://dashscope.aliyuncs.com/api/v1";}

private static final String MODEL_NAME = "qwen3.6-plus"; // 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

public static void videoImageListSample(String localPath1, String localPath2, String localPath3, String localPath4)

throws ApiException, NoApiKeyException, UploadFileException {

MultiModalConversation conv = new MultiModalConversation();

String filePath1 = "file://" + localPath1;

String filePath2 = "file://" + localPath2;

String filePath3 = "file://" + localPath3;

String filePath4 = "file://" + localPath4;

Map<String, Object> params = new HashMap<>();

params.put("video", Arrays.asList(filePath1,filePath2,filePath3,filePath4));

// 传入图像列表时,fps 参数适用于Qwen3.6、Qwen3-VL 和 Qwen2.5-VL系列模型

params.put("fps", 2);

MultiModalMessage userMessage = MultiModalMessage.builder()

.role(Role.USER.getValue())

.content(Arrays.asList(params,

Collections.singletonMap("text", "描述这个视频的具体过程")))

.build();

MultiModalConversationParam param = MultiModalConversationParam.builder()

// 各地域的API Key不同。获取API Key:https://www.alibabacloud.com/help/zh/model-studio/get-api-key

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model(MODEL_NAME)

.messages(Arrays.asList(userMessage)).build();

MultiModalConversationResult result = conv.call(param);

System.out.print(result.getOutput().getChoices().get(0).getMessage().getContent().get(0).get("text"));

}

public static void main(String[] args) {

try {

videoImageListSample(

"xxx/football1.jpg",

"xxx/football2.jpg",

"xxx/football3.jpg",

"xxx/football4.jpg");

} catch (ApiException | NoApiKeyException | UploadFileException e) {

System.out.println(e.getMessage());

}

System.exit(0);

}

}Base64 编码传入

OpenAI兼容

Python

import os

from openai import OpenAI

import base64

# 编码函数: 将本地文件转换为 Base64 编码的字符串

def encode_image(image_path):

with open(image_path, "rb") as image_file:

return base64.b64encode(image_file.read()).decode("utf-8")

base64_image1 = encode_image("football1.jpg")

base64_image2 = encode_image("football2.jpg")

base64_image3 = encode_image("football3.jpg")

base64_image4 = encode_image("football4.jpg")

client = OpenAI(

# 若没有配置环境变量,请用百炼API Key将下行替换为:api_key="sk-xxx",

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

api_key=os.getenv("DASHSCOPE_API_KEY"),

# 各地域配置不同,请根据实际地域修改

base_url="https://dashscope.aliyuncs.com/compatible-mode/v1",

)

completion = client.chat.completions.create(

model="qwen3.6-plus", # 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages=[

{"role": "user","content": [

{"type": "video","video": [

f"data:image/jpeg;base64,{base64_image1}",

f"data:image/jpeg;base64,{base64_image2}",

f"data:image/jpeg;base64,{base64_image3}",

f"data:image/jpeg;base64,{base64_image4}",]},

{"type": "text","text": "描述这个视频的具体过程"},

]}]

)

print(completion.choices[0].message.content)Node.js

import OpenAI from "openai";

import { readFileSync } from 'fs';

const openai = new OpenAI(

{

// 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

// 若没有配置环境变量,请用百炼API Key将下行替换为:apiKey: "sk-xxx"

apiKey: process.env.DASHSCOPE_API_KEY,

// 各地域配置不同,请根据实际地域修改

baseURL: "https://dashscope.aliyuncs.com/compatible-mode/v1"

}

);

const encodeImage = (imagePath) => {

const imageFile = readFileSync(imagePath);

return imageFile.toString('base64');

};

const base64Image1 = encodeImage("football1.jpg")

const base64Image2 = encodeImage("football2.jpg")

const base64Image3 = encodeImage("football3.jpg")

const base64Image4 = encodeImage("football4.jpg")

async function main() {

const completion = await openai.chat.completions.create({

model: "qwen3.6-plus", // 此处以qwen3.6-plus为例,可按需更换模型名称。模型列表:https://help.aliyun.com/zh/model-studio/models

messages: [

{"role": "user",

"content": [{"type": "video",

"video": [

`data:image/jpeg;base64,${base64Image1}`,

`data:image/jpeg;base64,${base64Image2}`,

`data:image/jpeg;base64,${base64Image3}`,

`data:image/jpeg;base64,${base64Image4}`]},

{"type": "text", "text": "这段视频描绘的是什么景象?"}]}]

});

console.log(completion.choices[0].message.content);

}

main();curl

将文件转换为 Base64 编码的字符串的方法可参见示例代码;

为了便于展示,代码中的

"data:image/jpg;base64,/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAA...",该Base64 编码字符串是截断的。在实际使用中,请务必传入完整的编码字符串。

# ======= 重要提示 =======

# 各地域的API Key不同。获取API Key:https://help.aliyun.com/zh/model-studio/get-api-key

# 各地域配置不同,请根据实际地域修改

# === 执行时请删除该注释 ===

curl -X POST https://dashscope.aliyuncs.com/compatible-mode/v1/chat/completions \

-H "Authorization: Bearer $DASHSCOPE_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"model": "qwen3.6-plus",

"messages": [{"role": "user",

"content": [{"type": "video",

"video": [

"data:image/jpeg;base64,/9j/4AAQSkZJRgABAQAAAQABAAD/2wBDAA...",

"data:image/jpeg;base64,nEpp6jpnP57MoWSyOWwrkXMJhHRCWYeFYb...",

"data:image/jpeg;base64,JHWQnJPc40GwQ7zERAtRMK6iIhnWw4080s...",

"data:image/jpeg;base64,adB6QOU5HP7dAYBBOg/Fb7KIptlbyEOu58..."

]},

{"type": "text",

"text": "描述这个视频的具体过程"}]}]

}'DashScope

Python

import base64

import os

from dashscope import MultiModalConversation

import dashscope

# 各地域配置不同,请根据实际地域修改

dashscope.base_http_api_url = "https://dashscope.aliyuncs.com/api/v1"

# 编码函数: 将本地文件转换为 Base64 编码的字符串

def encode_image(image_path):

with open(image_path, "rb") as image_file:

return base64.b64encode(image_file.read()).decode("utf-8")

base64_image1 = encode_image("football1.jpg")

base64_image2 = encode_image("football2.jpg")

base64_image3 = encode_image("football3.jpg")

base64_image4 = encode_image("football4.jpg")

messages = [{'role':'user',

'content': [

{'video':

[f"data:image/jpeg;base64,{base64_image1}",

f"data:image/jpeg;base64,{base64_image2}",

f"data:image/jpeg;base64,{base64_image3}",

f"data:image/jpeg;base64,{base64_image4}"